Humans have dreamed about traveling to other star systems and setting foot on alien worlds for generations. To put it mildly, interstellar exploration is a very daunting task. As we explored in a previous post, it would take between 1,000 and 81,000 years for a spacecraft to reach Alpha Centauri (of which Proxima Centauri is considered a companion) using conventional propulsion (or methods that are feasible with current technology). On top of that, there are numerous risks when traveling through the interstellar medium (ISM), not all of which are well understood.

Under the circumstances, gram-scale spacecraft that rely on directed-energy propulsion (a.k.a. lasers) appear to be the only viable option for reaching neighboring stars in this century. Proposed concepts include Swarming Proxima Centauri, a collaborative effort between Space Initiatives Inc. and the Initiative for Interstellar Studies (i4is) led by Space Initiatives' chief scientist Marshall Eubanks. The concept was recently selected for Phase I development as part of this year's NASA Innovative Advanced Concepts (NIAC) program.

According to Eubanks, traveling through interstellar space is a question of distance, energy, and speed. At a distance of 4.25 light-years (40 trillion km; 25 trillion mi) from the Solar System, even Proxima Centauri is unfathomably far away. To put it in perspective, the record for the farthest distance ever traveled by a spacecraft goes to the *Voyager 1* space probe, which is currently more than 24 billion km (15 billion mi) from Earth. Using conventional methods, the probe reached a maximum speed of 61,500 km/h (38,215 mph) and has been traveling for more than 46 years straight.
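
To make that scale concrete, here is a back-of-the-envelope sketch of how long the trip would take at the *Voyager 1* speed quoted above. It assumes a straight-line path at constant speed and a light-year of about 9.46 trillion km; the function and constant names are illustrative only.

```python
# Rough travel time to Proxima Centauri at Voyager 1's quoted top speed.
# Assumptions: constant speed, straight-line path, no gravity assists.
LIGHT_YEAR_KM = 9.4607e12       # kilometres in one light-year (approximate)
HOURS_PER_YEAR = 24 * 365.25    # hours in a Julian year


def travel_time_years(distance_ly: float, speed_kmh: float) -> float:
    """Return the travel time in years for a given distance and speed."""
    distance_km = distance_ly * LIGHT_YEAR_KM
    return distance_km / speed_kmh / HOURS_PER_YEAR


voyager_years = travel_time_years(4.25, 61_500)
print(f"About {voyager_years:,.0f} years")
```

Under these assumptions, the answer comes out to roughly 75,000 years, which sits near the upper end of the range quoted for conventional propulsion.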

In short, traveling at anything less than relativistic speed (a fraction of the speed of light) will make interstellar transits incredibly long and entirely impractical. Given the energy requirements this calls for, nothing but small spacecraft with a maximum mass of a few grams is feasible. As Eubanks told Universe Today via email:

"Of course, rockets are a common way to go fast. Rockets work by throwing “stuff” (typically hot gas) out the back, the momentum in the stuff going backwards equaling that in the velocity increase of the vehicle in the forward direction. The essence of rocketry is that it is only really efficient if the velocity of the stuff going backwards is comparable to the velocity you want to gain going forward. If it isn’t, if it is very much smaller, you just can’t carry enough stuff to gain the velocity you want.

"The trouble is that we have no technology – no energy source – that would enable us to throw out a lot of stuff at anything like 60,000 km/sec, and so rockets won’t work. Antimatter might conceivably enable this, but we just don’t understand antimatter well enough – and can’t make anywhere near enough of it – to make this a solution, probably for many decades to come."

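Eubanks's point about exhaust speed can be illustrated with the classical Tsiolkovsky rocket equation, in which the ratio of fueled mass to final mass is exp(Δv / v_e). The sketch below is only an illustration: the 4.5 km/s exhaust speed for a chemical rocket is an assumed typical value, not a figure from the interview, and the classical equation ignores relativistic corrections. Because the ratio overflows ordinary floating-point numbers, the code reports its base-10 logarithm, i.e., the number of digits in the mass ratio.

```python
import math


def log10_mass_ratio(delta_v_km_s: float, exhaust_km_s: float) -> float:
    """log10 of (initial mass / final mass) from the classical rocket equation."""
    return (delta_v_km_s / exhaust_km_s) / math.log(10)


TARGET_KM_S = 60_000.0          # target speed mentioned in the quote
CHEMICAL_EXHAUST_KM_S = 4.5     # assumed typical chemical-rocket exhaust speed

chemical_digits = log10_mass_ratio(TARGET_KM_S, CHEMICAL_EXHAUST_KM_S)
matched_digits = log10_mass_ratio(TARGET_KM_S, TARGET_KM_S)

# With chemical exhaust, the mass ratio has thousands of digits.
print(f"chemical exhaust: mass ratio ~ 10^{chemical_digits:.0f}")
# With exhaust as fast as the target speed, the ratio is just e (about 2.7).
print(f"matched exhaust:  mass ratio ~ {10 ** matched_digits:.2f}")
```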
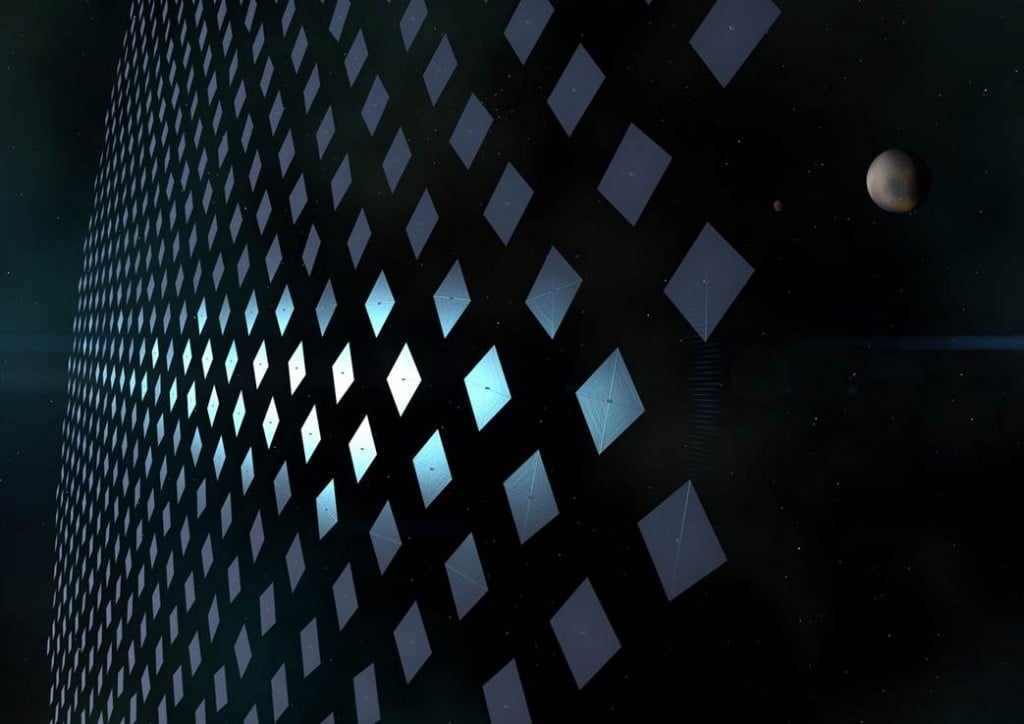

In contrast, concepts like Breakthrough Starshot and the Proxima Swarm rely on "inverting the rocket" – i.e., instead of throwing stuff out, stuff is thrown at the spacecraft. Instead of carrying heavy propellant, which makes up most of a conventional rocket's mass, a lightsail is pushed by photons (which have no mass and move at the speed of light). But as Eubanks indicated, this does not overcome the issue of energy, making it even more important that the spacecraft be as small as possible.

"Bouncing photons off of a laser sail thus solves the speed-of-stuff problem," he said. "But the trouble is, there is not much momentum in a photon, so we need a lot of them. And given the power we are likely to have available, even a couple of decades from now, the thrust will be weak, so the mass of the probes needs to be very small – grams, not tons."

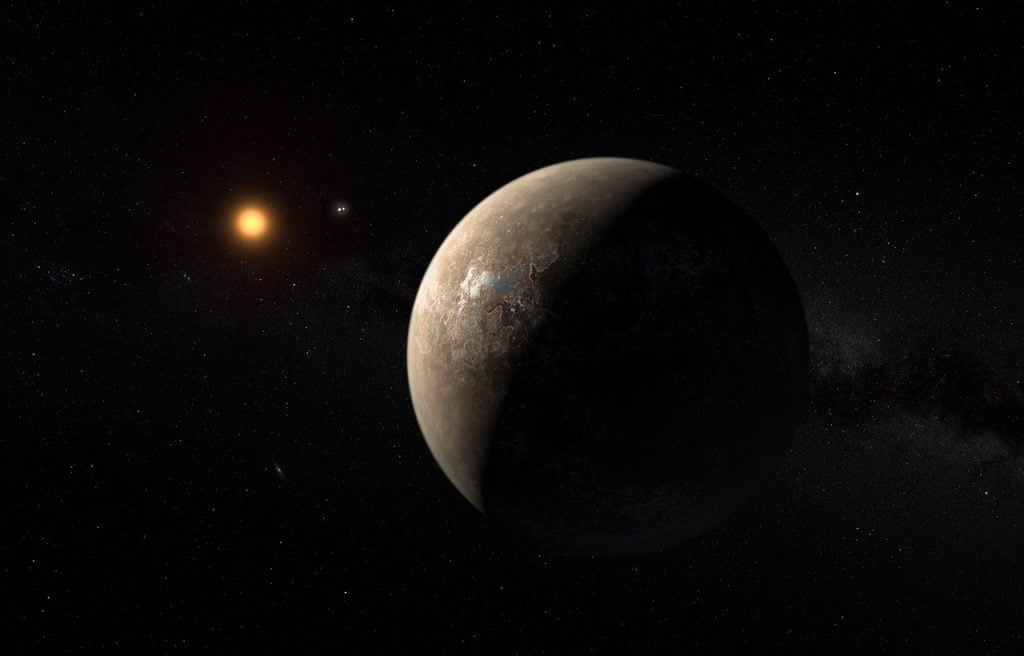

Their proposal calls for a 100-gigawatt (GW) laser beamer boosting thousands of gram-scale space probes with laser sails, one at a time, to relativistic speed (~10-20% of the speed of light). They also proposed a series of terrestrial light buckets with a collecting area of a square kilometer (0.386 mi²) to catch the light signals from the probes once they are well on their way to Proxima Centauri (and communications become more difficult). By their estimates, this mission concept could be ready for development around midcentury and could reach Proxima Centauri and its Earth-like exoplanet (Proxima b) by the third quarter of this century (2075 or after).
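
For a rough sense of what these numbers imply, the sketch below applies the standard radiation-pressure formula for a perfectly reflecting sail, F = 2P/c. It is an idealized estimate rather than the team's own model: it assumes the full 100 GW lands on the sail, a probe mass of exactly one gram, Newtonian acceleration, and no beam spreading or Doppler losses. All variable names are illustrative.

```python
C_M_S = 299_792_458.0        # speed of light in m/s


def sail_thrust_newtons(beam_power_w: float) -> float:
    """Force on an ideal, perfectly reflecting sail at normal incidence."""
    return 2.0 * beam_power_w / C_M_S


BEAM_POWER_W = 100e9         # 100 GW, the figure from the proposal
PROBE_MASS_KG = 1e-3         # assumed: a one-gram probe
TARGET_FRACTION_C = 0.2      # upper end of the quoted 10-20% range
DISTANCE_LY = 4.25           # distance to Proxima Centauri

thrust_n = sail_thrust_newtons(BEAM_POWER_W)
accel_m_s2 = thrust_n / PROBE_MASS_KG
# Newtonian estimate of how long the beam must push to reach the target speed.
boost_seconds = TARGET_FRACTION_C * C_M_S / accel_m_s2
# Cruise time, ignoring the brief acceleration phase.
cruise_years = DISTANCE_LY / TARGET_FRACTION_C

print(f"thrust: {thrust_n:.0f} N")
print(f"boost time: {boost_seconds:.0f} s")
print(f"cruise time: {cruise_years:.2f} years")
```

Under these idealized assumptions, the thrust is only a few hundred newtons, which is why the probe has to be so light: on a one-gram craft, it is enough to reach 20% of light speed in about a minute and a half, while the same push on a tonne-scale spacecraft would take years of continuous beaming.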

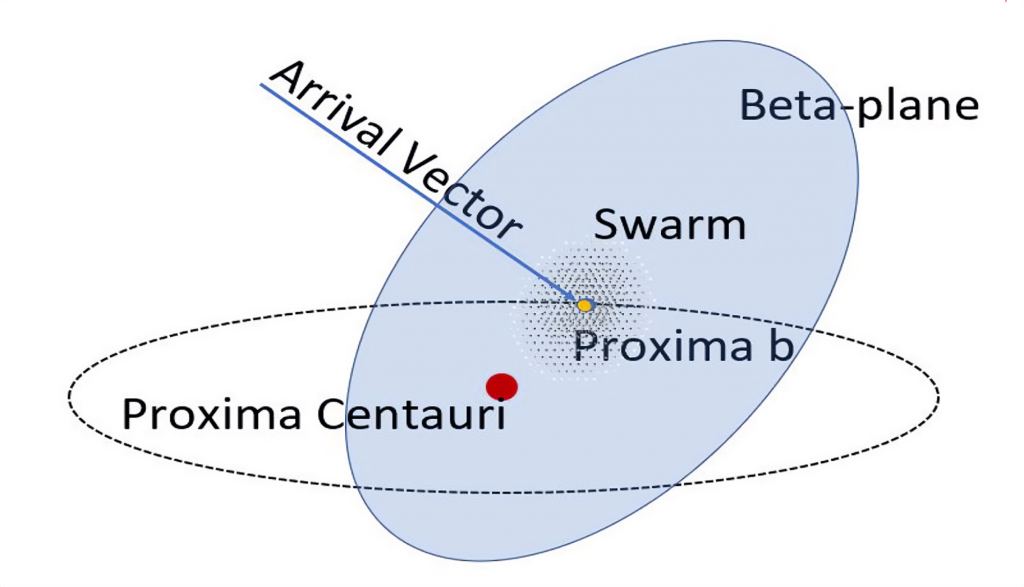

In a previous paper, Eubanks and his colleagues demonstrated how a fleet of a thousand spacecraft could use swarm dynamics to overcome the difficulties of interstellar travel and of maintaining communications with Earth. "By raising the collecting area to a doable 1 km² and having many probes coordinate their sending, we can get a reasonable (if smaller) bit rate," he added. However, the roughly eight-year round-trip lag on communications imposed by interstellar distances and the finite speed of light makes controlling the probes remotely from Earth impossible.

As such, the swarm must possess an extraordinary degree of autonomy when it comes to navigation (coordinating a thousand probes) and deciding what data is returned to Earth. While these strategies address distance, energy, and speed (at least for the time being), there is still the issue of how much it will cost to create the swarm and the associated infrastructure. The single greatest expense will be the laser array itself, whereas the gram-scale craft will be reasonably cheap to produce. As Eubanks told Universe Today in a previous article, their proposal can be developed with a budget of $100 billion.

But as Eubanks emphasized (then and now), the benefits of the mission architecture they've envisioned are legion, and the payoff of sending a swarm of probes to Proxima Centauri would be astronomical:

"The simple fact is that the cost of a laser-propelled interstellar mission, with light-weight probes and a huge laser system to propel them to the stars, will be dominated by capital costs – the costs of the laser system. The probes themselves will be pretty cheap by comparison. So, if you can send one, you should send lots. Clearly, sending a lot of probes brings the advantage of redundancy. Space travel is risky, and interstellar travel is likely to be especially risky, so if we send a lot of probes, we can tolerate a high loss rate. But we can do a lot more."

"We want to look for signs of biology and even technology, and so it would be good to get probes very close to the planet, to get good pictures and spectra of the surface and atmosphere. That will be tough for one probe, as we don’t know very well where the planet will be 24-plus years in the future. By sending a bunch of probes in a spread, at least a few should get close to the planet, giving us the close-up view we want."

Beyond that, Eubanks and his colleagues hope that the development of a coherent swarm of robotic probes will have applications closer to home. Swarm robotics is a hot field of research today and is being investigated as a possible means of exploring Europa's interior ocean, digging underground cities on Mars, assembling large structures in space, and providing extreme weather tracking from Earth's orbit. Beyond space exploration and Earth observation, swarm robotics also has applications in medicine, additive manufacturing, environmental studies, global positioning and navigation, search and rescue, and more.

While it could take many decades before an interstellar mission is ready to travel to Alpha Centauri, Eubanks and his colleagues are honored and excited to be among NASA's selectees for the 2024 NIAC program. For them, the research took many years but is closer to realization than ever. "It's been a long time – almost a decade – and we feel honored to be selected," said Eubanks. "Now the real work begins."

*Further Reading: NASA*

Universe Today
