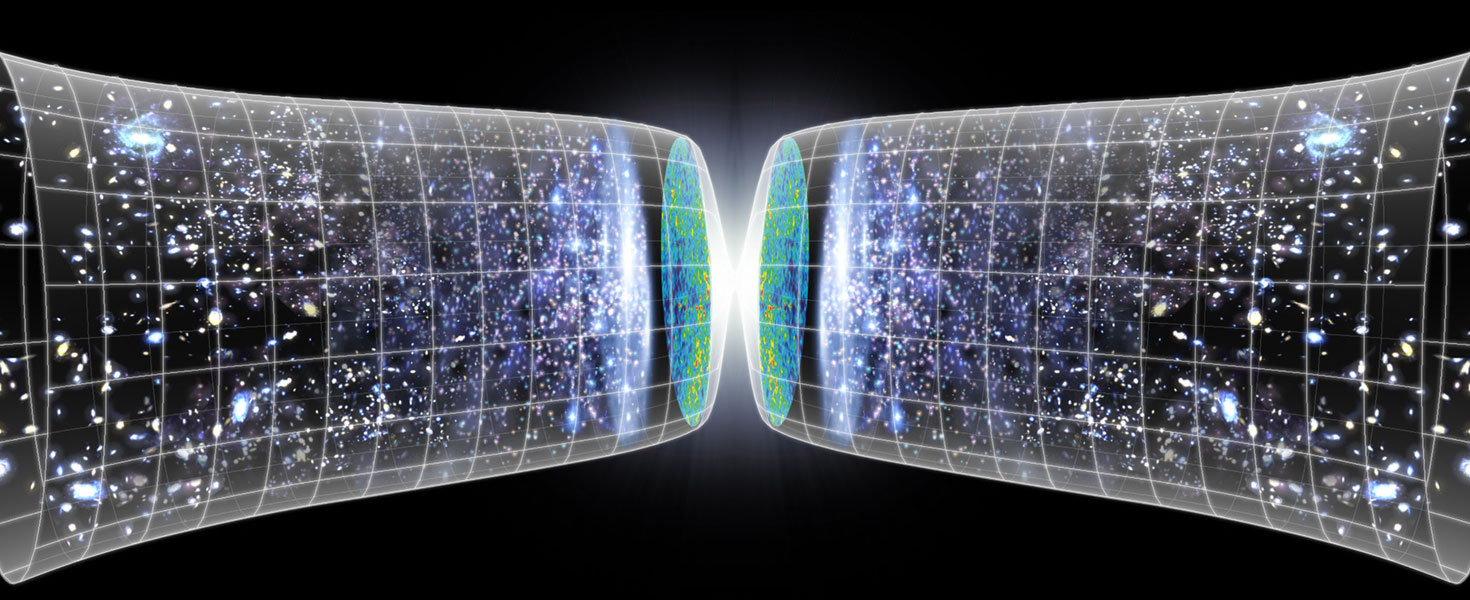

In 2011, the Nobel Prize in Physics was awarded to Perlmutter, Schmidt, and Riess for their discovery that the universe is not just expanding, it is accelerating. The work supported the idea of a universe filled with dark energy and dark matter, and it was based on observations of distant supernovae, particularly Type Ia supernovae, which have consistent light curves we can use as standard candles to measure cosmic distances. Now a new study of more than 1,500 supernovae confirms dark energy and dark matter, but also raises questions about our cosmological models.
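As a rough illustration of how a standard candle yields a distance, the sketch below applies the textbook distance modulus relation, m − M = 5 log10(d) − 5, with d in parsecs. The function name, the sample apparent magnitude, and the assumed peak absolute magnitude of about −19.3 for a Type Ia supernova are illustrative choices, not values taken from the study. Real analyses also correct for dust, light-curve shape, and redshift effects, which this sketch ignores.

```python
def luminosity_distance_pc(apparent_mag, absolute_mag):
    """Distance in parsecs from the distance modulus m - M = 5*log10(d) - 5."""
    return 10 ** ((apparent_mag - absolute_mag + 5) / 5)


# Assumed, illustrative peak absolute magnitude for a Type Ia supernova.
ASSUMED_PEAK_ABS_MAG = -19.3

# Hypothetical observed peak apparent magnitude of a distant supernova.
observed_mag = 24.0

distance_pc = luminosity_distance_pc(observed_mag, ASSUMED_PEAK_ABS_MAG)
print(f"Estimated distance: {distance_pc / 1e6:.0f} Mpc")
```

Because every Type Ia supernova is assumed to peak at roughly the same intrinsic brightness, a fainter observed peak translates directly into a larger distance.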

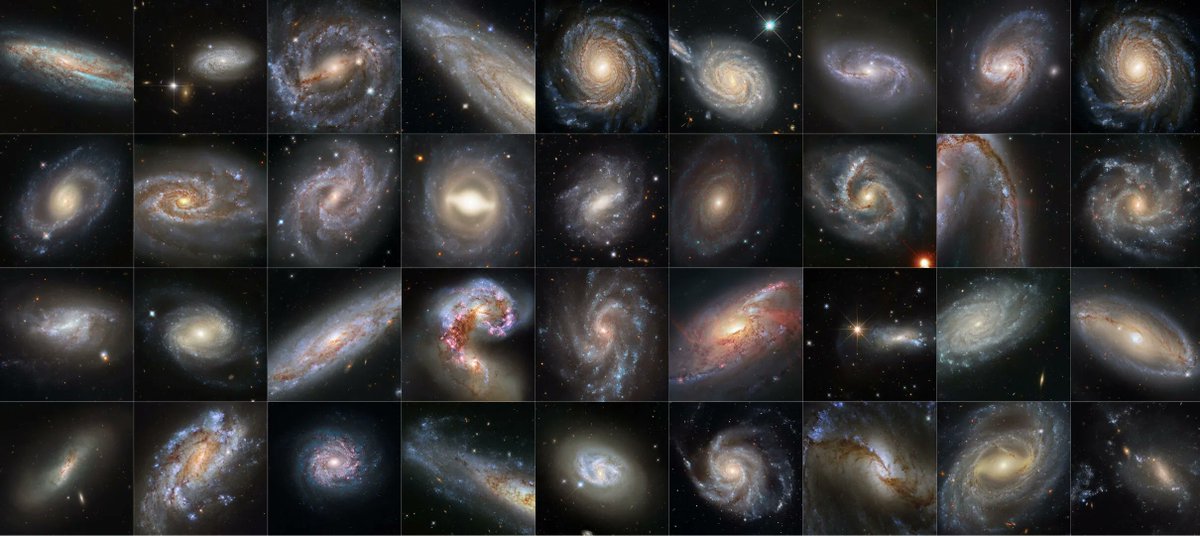

Continue reading “Astronomers Chart the Influence of Dark Matter and Dark Energy on the Universe by Measuring Over 1,500 Supernovae”

Supernovae Were Discovered in all These Galaxies

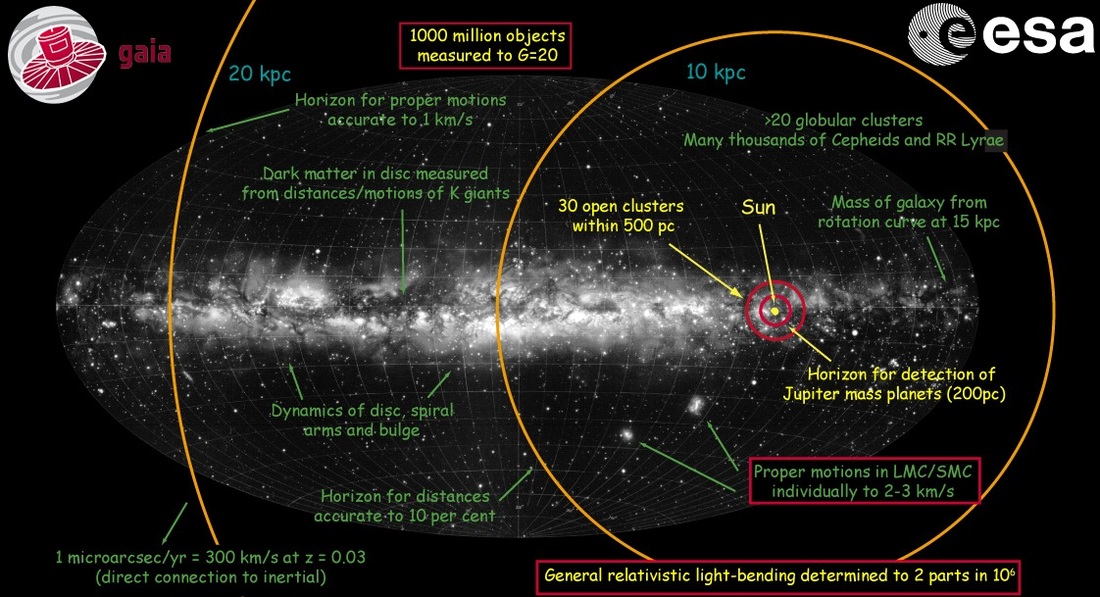

The Hubble Space Telescope has provided some of the most spectacular astronomical pictures ever taken. Some of them have even been used to confirm the value of another Hubble – the constant that determines the speed of expansion of the Universe. Now, in what Nobel laureate Adam Riess calls Hubble’s “magnum opus,” scientists have released a series of spectacular spiral galaxies that have helped pinpoint that expansion constant – and it’s not what they expected.

Continue reading “Supernovae Were Discovered in all These Galaxies”

Does a “Mirror World of Particles” Explain the Crisis in Cosmology?

The idea of a mirror universe is a common trope in science fiction: a world similar to ours where we might find our evil doppelganger or a version of us who actually asked out our high school crush. But the concept of a mirror universe has often been studied in theoretical cosmology, and as a new study shows, it might help us solve problems with the cosmological constant.

Continue reading “Does a “Mirror World of Particles” Explain the Crisis in Cosmology?”

Hubble is Fully Operational Once Again

In the history of space exploration, a handful of missions have set new records for ruggedness and longevity. On Mars, the undisputed champion is the Opportunity rover, which was slated to run for 90 days but remained in operation for 15 years instead! In orbit around Mars, that honor goes to the 2001 Mars Odyssey, which is still operational 20 years after it arrived around the Red Planet.

In deep space, the title for the longest-running mission goes to the Voyager 1 probe, which has spent the past 44 years exploring the Solar System and what lies beyond. But in Earth orbit, the longevity prize goes to the Hubble Space Telescope (HST), which is once again fully operational after experiencing technical issues. With this latest restoration of operations, Hubble is well on its way to completing 32 years of service.

Continue reading “Hubble is Fully Operational Once Again”

There’s a Problem With Hubble, and NASA Hasn’t Been Able to fix it yet

For over thirty years, the Hubble Space Telescope has been in continuous operation in Low Earth Orbit (LEO), revealing never-before-seen aspects of the Universe. In addition to capturing breathtaking images of our Solar System and discovering extrasolar planets, Hubble has also probed the deepest reaches of time and space, causing astrophysicists to revise many of their previously held theories about the cosmos.

Unfortunately, Hubble may finally be reaching the end of its lifespan. In recent weeks, NASA identified a problem with the telescope’s payload computer, which suddenly stopped working. This caused Hubble and all of its scientific instruments to go into safe mode and shut down. After many days of tests and checks, technicians at the NASA Goddard Space Flight Center have yet to identify the root of the problem and get Hubble back online.

Continue reading “There’s a Problem With Hubble, and NASA Hasn’t Been Able to fix it yet”

Is the Hubble constant not…Constant?

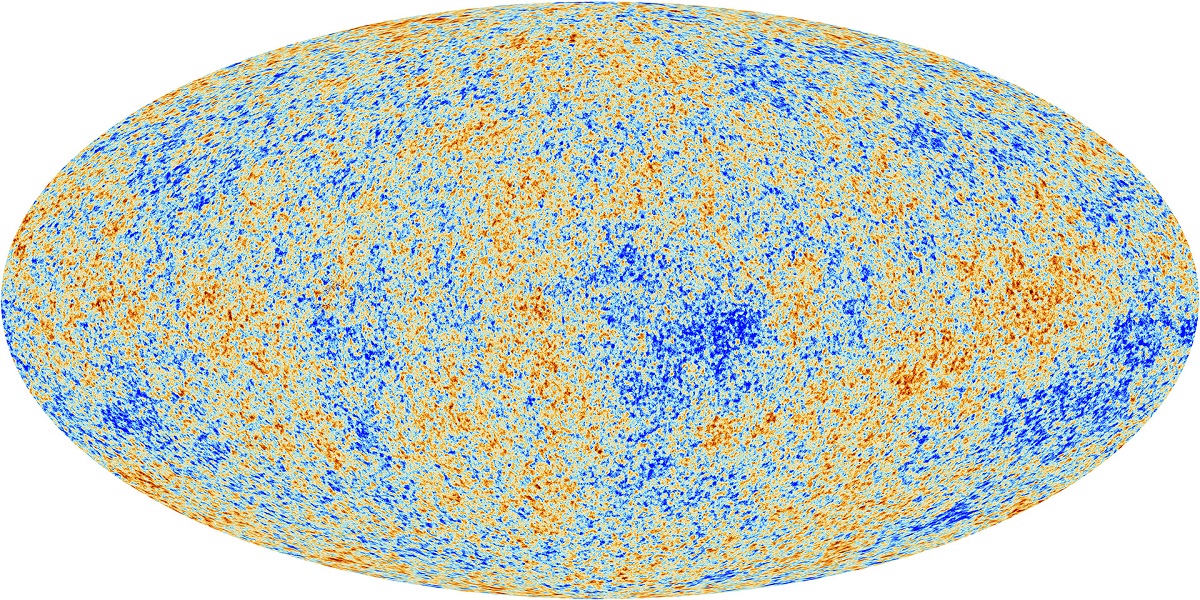

Cosmologists have been struggling to understand an apparent tension in their measurements of the present-day expansion rate of the universe, known as the Hubble constant. Observations of the early cosmos – mostly the cosmic microwave background – point to a significantly lower Hubble constant than the value obtained through observations of the late universe, primarily from supernovae. A team of astronomers has dug into the data and found that one possible way to relieve this tension is to allow the Hubble constant, paradoxically, to evolve with time. This result could point to either new physics…or just a misunderstanding of the data.

“The point is that there seems to be a tension between the larger values for late universe observations and lower values for early universe observation,” said Enrico Rinaldi, a research fellow in the University of Michigan Department of Physics and coauthor on the study. “The question we asked in this paper is: What if the Hubble constant is not constant? What if it actually changes?”

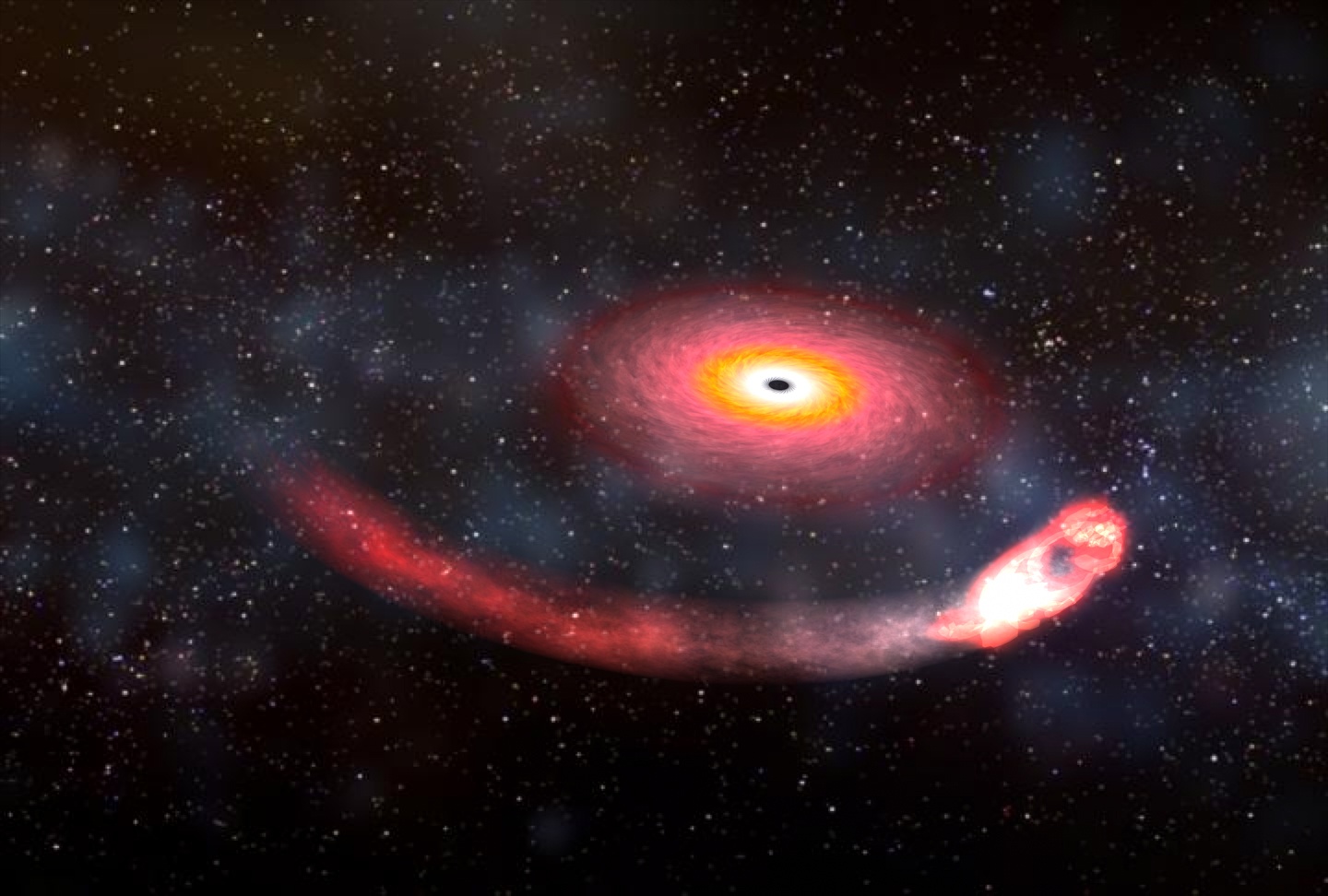

Continue reading “Is the Hubble constant not…Constant?”

Black Hole-Neutron Star Collisions Could Finally Settle the Different Measurements Over the Expansion Rate of the Universe

If you’ve been following developments in astronomy over the last few years, you may have heard about the so-called “crisis in cosmology,” which has astronomers wondering whether there might be something wrong with our current understanding of the Universe. This crisis revolves around the rate at which the Universe expands: measurements of the expansion rate in the present Universe don’t line up with measurements of the expansion rate during the early Universe. With no indication of why these measurements might disagree, astronomers are at a loss to explain the disparity.

The first step in solving this mystery is to try out new methods of measuring the expansion rate. In a paper published last week, researchers at University College London (UCL) suggested that we might be able to create a new, independent measure of the expansion rate of the Universe by observing black hole-neutron star collisions.

Continue reading “Black Hole-Neutron Star Collisions Could Finally Settle the Different Measurements Over the Expansion Rate of the Universe”

Nancy Grace Roman Telescope is Getting an Upgraded new Infrared Filter

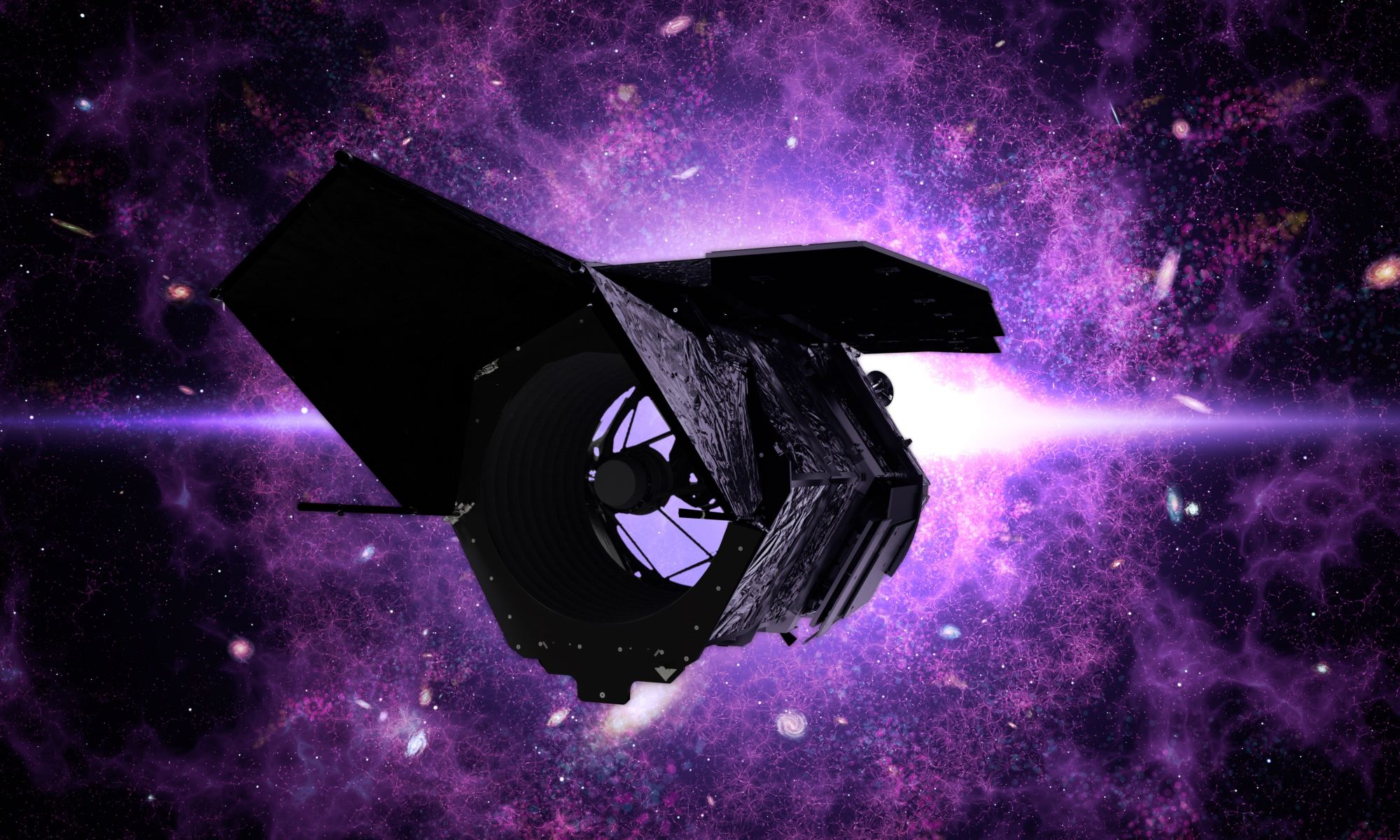

In 2025, the Nancy Grace Roman Space Telescope will launch to space. Named in honor of NASA’s first chief astronomer (and the “Mother of Hubble”), the Roman telescope will be the most advanced and powerful observatory ever deployed. With a camera as sensitive as its predecessors, and next-generation surveying capabilities, Roman will have the power of “One-Hundred Hubbles.”

In order to meet its scientific objectives and explore some of the greatest mysteries of the cosmos, Roman will be fitted with a number of infrared filters. But with the decision to add a new near-infrared filter, Roman will exceed its original design and be able to explore 20% of the infrared Universe. This opens the door for exciting new research and discoveries, from the edge of the Solar System to the farthest reaches of space.

Continue reading “Nancy Grace Roman Telescope is Getting an Upgraded new Infrared Filter”

New Observations Agree That the Universe is 13.77 Billion Years old

The oldest light in the universe is that of the cosmic microwave background (CMB). This light was formed when the dense matter at the beginning of the universe finally cooled enough to become transparent. It has traveled for billions of years to reach us, stretched from a bright orange glow to cool, invisible microwaves. Naturally, it is an excellent source for understanding the history and expansion of the cosmos.

Continue reading “New Observations Agree That the Universe is 13.77 Billion Years old”

Astronomers Improve Their Distance Scale for the Universe. Unfortunately, it Doesn't Resolve the Crisis in Cosmology

Measuring the expansion of the universe is hard. For one thing, because the universe is expanding, the scale of your distance measurements affects the scale of the expansion. And since light from distant galaxies takes time to reach us, you can’t measure what the universe is, but rather what it was. Then there is the challenge of the cosmic distance ladder.

Continue reading “Astronomers Improve Their Distance Scale for the Universe. Unfortunately, it Doesn't Resolve the Crisis in Cosmology”