Sometimes, it seems like habitable worlds can pop up almost anywhere in the universe. A recent paper from a team of citizen scientists led by researchers at the Flatiron Institute might have found an excellent candidate to look for one – on a moon orbiting a mini-Neptune orbiting a star that is also orbited by another star.

Continue reading “A Mini-Neptune in the Habitable Zone in a Binary Star System”

How Animal Movements Help Us Study the Planet

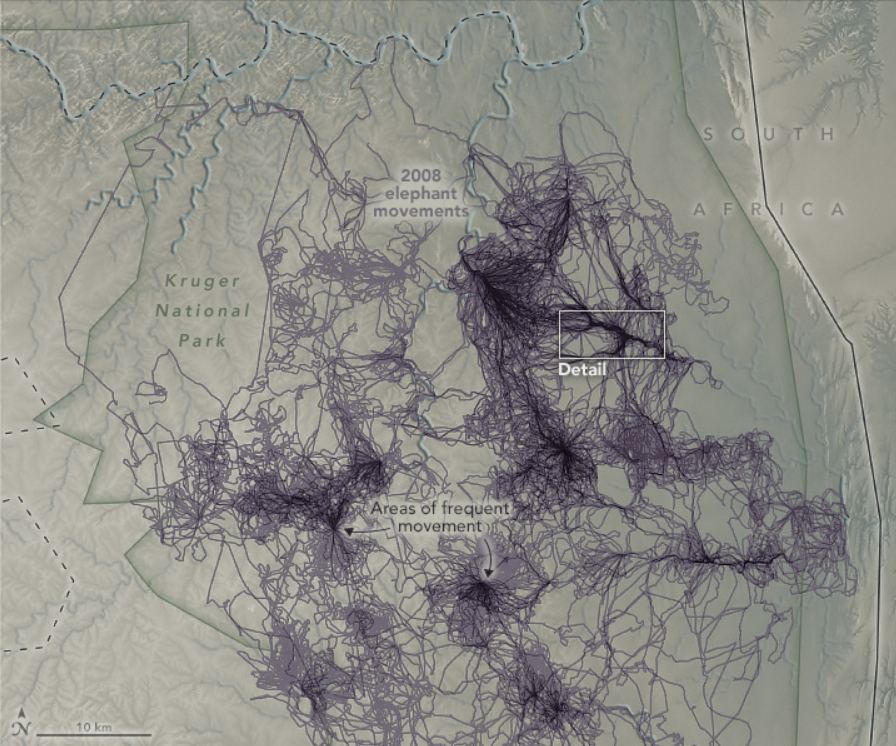

Scientists have been underutilizing a key resource we can use to help us understand Earth: animals. Our fellow Earthlings have a much different, and usually much more direct, relationship with the Earth. They move around the planet in ways and to places we don’t.

What can their movements tell us?

Continue reading “How Animal Movements Help Us Study the Planet”

Amateur Astronomers Found Planets Crashing Into Each Other

Astronomy is one of the sciences where amateurs make regular contributions. Over the years, members of the public have made exciting discoveries and meaningful contributions to the scientific process through direct observation, citizen science projects, or combing through open data from the various space missions.

Recently, amateur astronomer Arttu Sainio saw a conversation on X (Twitter) where researchers were discussing the strange behavior of a dimming Sun-like star. Intrigued, Sainio decided to look at the data on this star, called ASASSN-21qj, on his own. Looking at archival data from NASA’s NEOWISE mission, he was surprised to find that the star had dimmed before, with an unexpected brightening in infrared light two years before the optical dimming event. So, he joined the discussion on social media and shared his finding, which led to more amateurs joining the research, which in turn led to an incredible discovery.

Continue reading “Amateur Astronomers Found Planets Crashing Into Each Other”

NASA’s Exoplanet Watch Wants Your Help Studying Planets Around Other Stars

It’s no secret that the study of extrasolar planets has exploded since the turn of the century. Whereas astronomers knew of fewer than a dozen exoplanets twenty years ago, thousands of candidates are available for study today. In fact, as of January 13th, 2023, a total of 5,241 planets have been confirmed in 3,916 star systems, with another 9,169 candidates awaiting confirmation. While opportunities for exoplanet research have grown exponentially, so too has the arduous task of sorting through the massive amounts of data involved.

This is why astronomers, universities, research institutes, and space agencies have come to rely on citizen scientists in recent years. With the help of online resources, data-sharing, and networking, skilled amateurs can lend their time, energy, and resources to the hunt for planets beyond our Solar System. In recognition of their importance, NASA has launched Exoplanet Watch, a citizen science project sponsored by NASA’s Universe of Learning. This project lets regular people learn about exoplanets and get involved in the discovery and characterization process.

Continue reading “NASA’s Exoplanet Watch Wants Your Help Studying Planets Around Other Stars”

The Oort Cloud Could Have More Rock Than Previously Believed

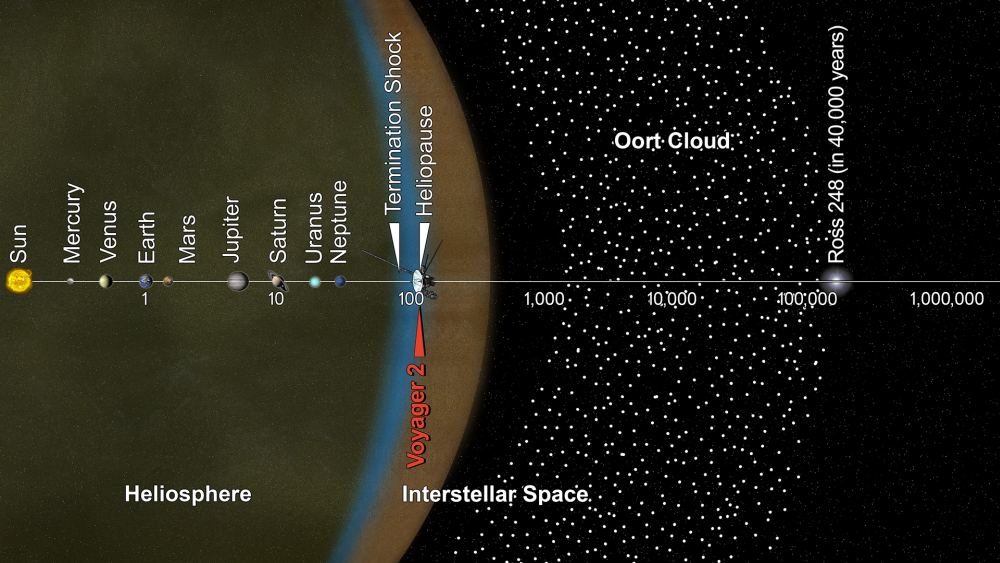

The Oort Cloud is a collection of icy objects in the furthest reaches of the Solar System. It contains the most distant objects in the Solar System, and instead of orbiting on a plane like the planets or forming a ring like the Kuiper Belt, it’s a vast spherical cloud centred on the Sun. It’s where comets originate, and beyond it is interstellar space.

At least that’s what scientists think; nobody’s ever seen it.

Continue reading “The Oort Cloud Could Have More Rock Than Previously Believed”

NASA Provides a Timelapse Movie Showing How the Universe Changed Over 12 Years

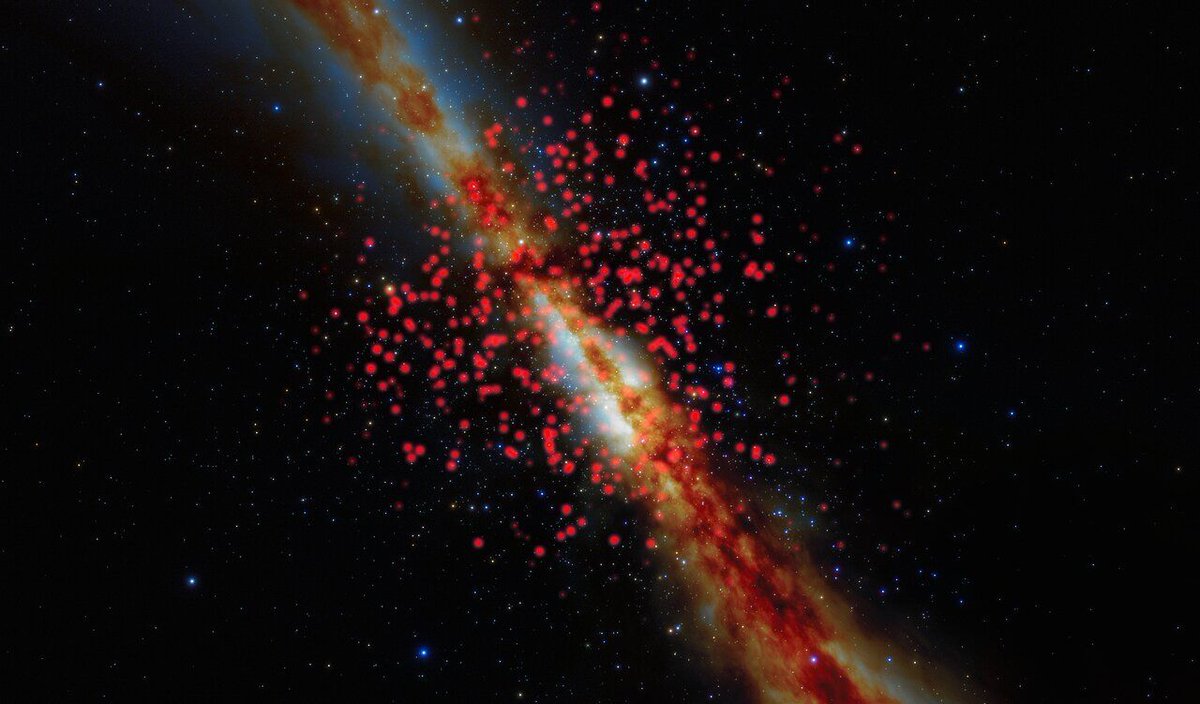

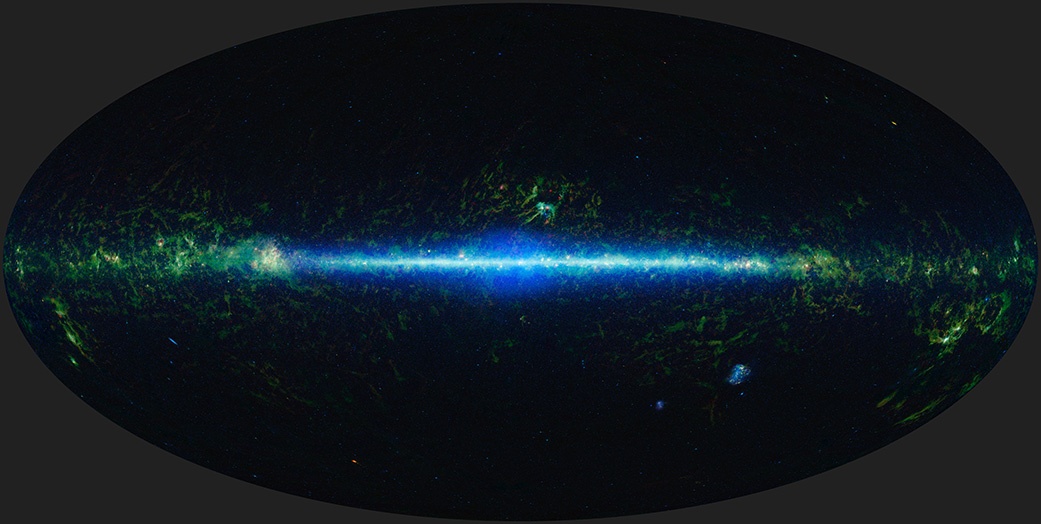

The Universe is over 13 billion years old, so a 12-year slice of that time might seem uneventful. But a timelapse movie from NASA shows how much can change in just over a decade. Stars pulse, asteroids follow their trajectories, and distant black holes flare as they pull gas and dust toward themselves.

Continue reading “NASA Provides a Timelapse Movie Showing How the Universe Changed Over 12 Years”

Even Citizen Scientists are Getting Time on JWST

Over the years, members of the public have regularly made exciting discoveries and meaningful contributions to the scientific process through citizen science projects. These citizen scientists sometimes mine large datasets for cosmic treasures, uncovering unknown objects such as Hanny’s Voorwerp, or other times bring an unusual phenomenon to scientists’ attention, such as the discovery of the new aurora-like spectacle called STEVE. Whatever the project, the advent of citizen science projects has changed the nature of scientific engagement between the public and the scientific community.

Now, unusual brown dwarf stars discovered by citizen scientists will be observed by the James Webb Space Telescope, in the hope of learning more about these rare objects. Excitingly, one of the citizen scientists has been named as a co-investigator on a winning Webb proposal.

Continue reading “Even Citizen Scientists are Getting Time on JWST”

Ganymede Casts a Long Shadow Across the Surface of Jupiter

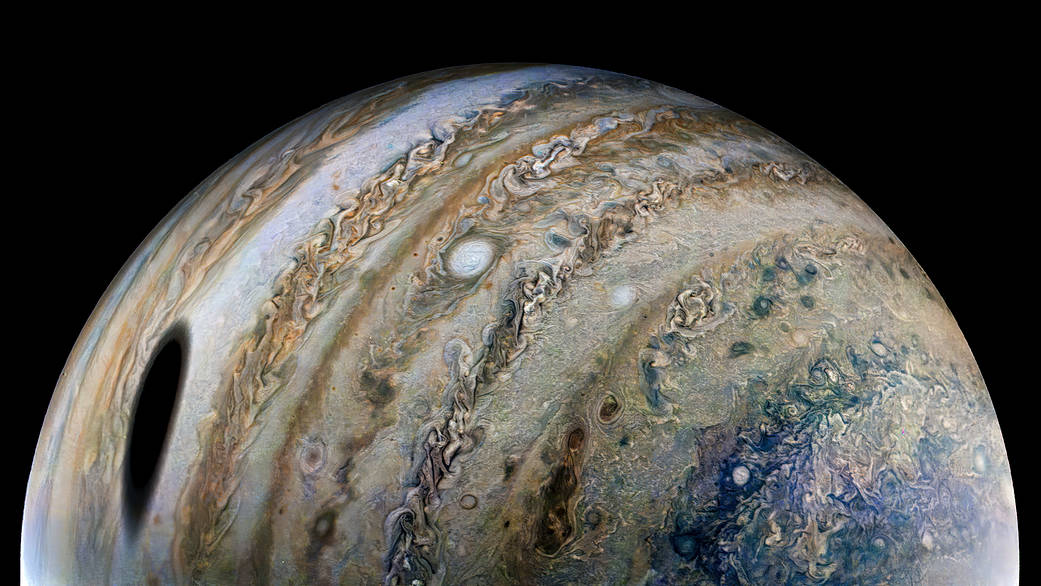

What is that large dark smudge on Jupiter’s side? It may remind you of a certain scene from the sci-fi film “2010: The Year We Make Contact,” where a growing black spot appears in Jupiter’s atmosphere.

But this is a real photo, and the dark spot is just an elongated shadow of Ganymede, Jupiter’s largest moon. Just as Earth’s Moon creates an eclipse for lucky Earthlings when it crosses between our planet and the Sun, Jupiter’s moons cast shadows when they cross between the gas giant and the Sun.

Continue reading “Ganymede Casts a Long Shadow Across the Surface of Jupiter”

Citizen Scientists Discover a new Feature in Star Formation: “Yellowballs”

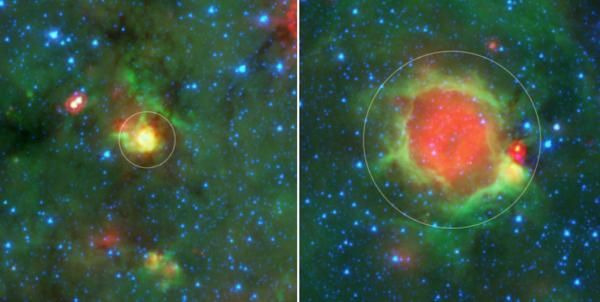

AI is often touted as being particularly good at finding patterns amongst reams of data. But humans are also extremely good at pattern recognition, especially when it comes to visual images. Citizen science efforts around the globe leverage this fact, and recent results released from the Milky Way Project on Zooniverse show how effective it can be. The project’s volunteer team identified 6,176 “yellowballs”, which are a stage that star clusters go through during their early years. That discovery helps scientists better understand the formation of these clusters and how they eventually develop into individual stars.

Continue reading “Citizen Scientists Discover a new Feature in Star Formation: “Yellowballs””

We Now Have a 3D Map of The 525 Closest Brown Dwarfs

Zooniverse brings out the best of the internet: it leverages the skills of average people to perform scientific feats that would be impossible otherwise. One of the tasks that a Zooniverse project called Backyard Worlds: Planet 9 has been working on has now resulted in a paper cataloguing 525 brown dwarfs, including 38 that had never been documented before.

Continue reading “We Now Have a 3D Map of The 525 Closest Brown Dwarfs”