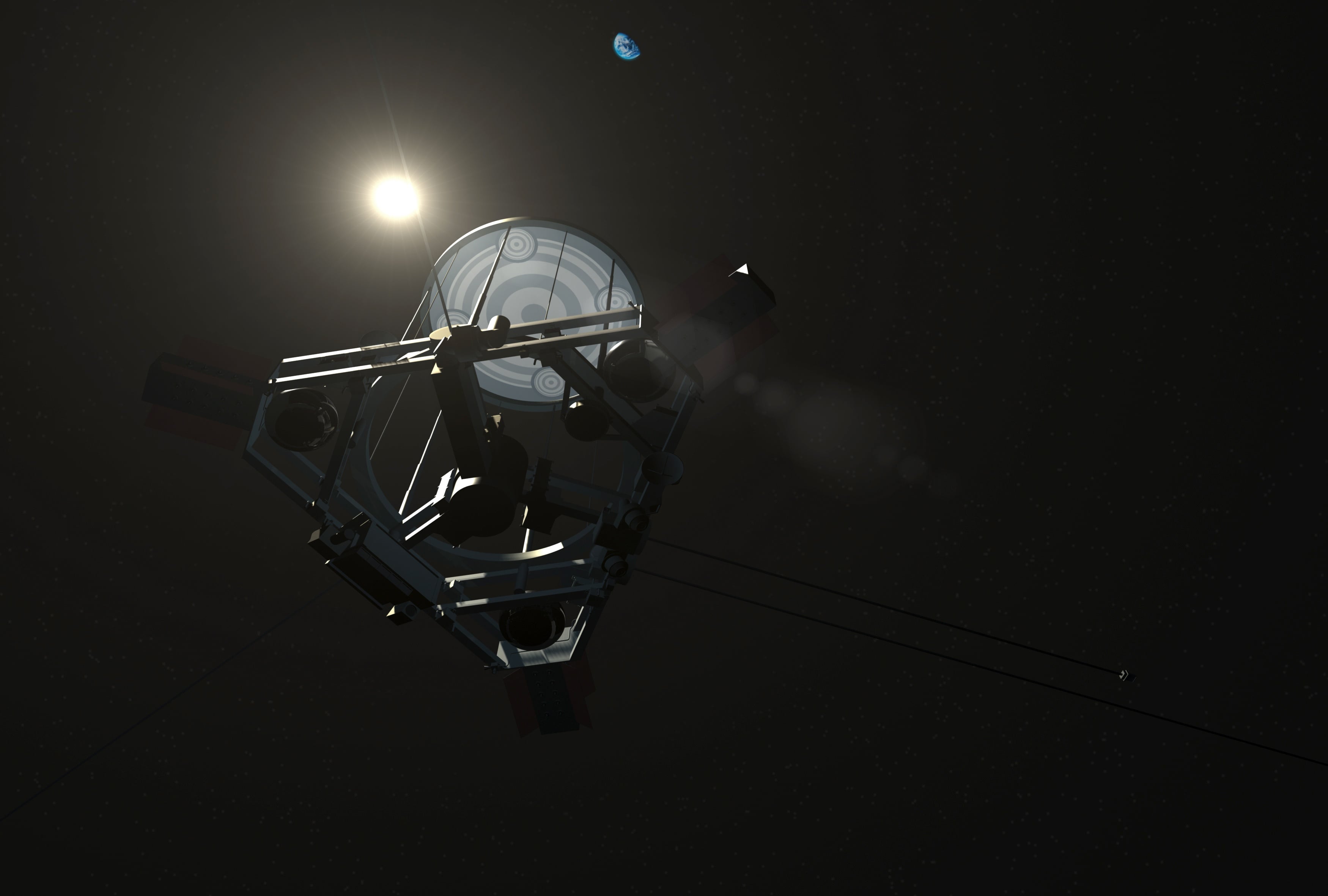

In 1948/49, famed computer scientist, engineer, and physicist John von Neumann introduced the world to his revolutionary idea for a species of self-replicating robots (aka. "Universal Assemblers"). In time, researchers involved in the Search for Extraterrestrial Intelligence (SETI) adopted this idea, stating that self-replicating probes would be an effective way to explore the cosmos and that an advanced species may be doing this already. Among SETI researchers, "Von Neumann probes" (as they've come to be known) are considered a viable indication of technologically advanced species (technosignature).

Given the rate of progress with robotics, it's likely just a matter of time before humanity can deploy Von Neumann probes, and the range of applications is endless. But what about the safety implications? In a recent study, Carleton University Professor Alex Ellery explores the potential harm that Von Neumann probes could cause. In particular, Ellery considers the prospect of runaway population growth (aka. the "grey goo problem") and how a series of biologically-inspired controls that impose a cap on their replication cycles would prevent that.

Professor Ellery is the Canada Research Chair in Space Robotics & Space Technology in the Mechanical & Aerospace Engineering Department at Carleton University, Ottawa. The paper that describes his findings, titled "Curbing the fruitfulness of self-replicating machines," recently appeared in the *International Journal of Astrobiology*. For the sake of this study, Ellery investigated how interstellar Von Neumann probes could explore the Milky Way galaxy safely by imposing a limit on their ability to reproduce.

Universal Assemblers in Space

The topic of Von Neumann probes and their applications (and implications) for space exploration and SETI is one in which Ellery is well-versed. While Von Neumann was interested in self-replicating machines as a means of advancing the frontiers of robotics and manufacturing, the concept was quickly seized upon by researchers engaged in the Search for Extraterrestrial Intelligence (SETI). During the 1980s, astronomer Frank Tipler used the concept of these machines to advance the argument that intelligent life did not exist beyond Earth.

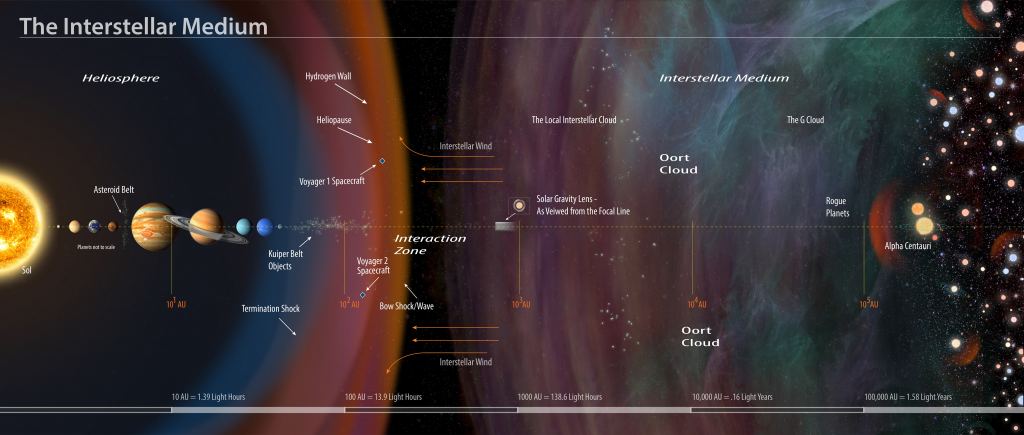

This argument remains central to the Fermi Paradox, which essentially states that the assumed likelihood of intelligent life in the Universe stands in contrast to the absence of evidence for it. According to Tipler, an advanced intelligence that preceded humanity would have likely created Von Neumann probes long ago to explore and colonize the galaxy and have had ample time to do it. As he stated in his first paper on the subject, titled "Extraterrestrial Intelligent Beings do not Exist" (released in 1979):

"I shall assume that such a species will eventually develop a self-replicating universal constructor with intelligence comparable to the human level - such a machine should be developed within a century, according to the experts - and such a machine combined with present-day rocket technology would make it possible to explore and/or colonize the Galaxy in less than 300 million years."

Considering that humanity sees no evidence of self-replicating machines in our galaxy (and hasn't been visited by any), we must assume that no intelligent civilizations exist, says Tipler. These conclusions prompted a spirited response from Carl Sagan and William Newman in a paper titled "The Solipsist Approach to Extraterrestrial Intelligence" (aka. "Sagan's Response"), where Sagan famously stated that "the absence of evidence is not the evidence of absence." This debate and Tipler's paper profoundly influenced Ellery, who was studying in the UK at the time. As he recounted to Universe Today via email:

"When I was at the university of Sussex doing the masters in astronomy, I came across the Von Neumann probe concept. And that completely captivated my imagination and I decided then to shift to engineering and space robotics. Basically, I was trying to figure out how I could start to work on Von Neumann probes, eventually. After my PhD, I spent a couple years in working in a hospital as a medical physicist and in industry for a bit, and then came back to academia."

Traditionally, Ellery's work has been focused on space robotics, primarily with planetary rovers for Martian exploration and other aspects like servicing manipulators and space debris removal. Through this, Ellery has also maintained a close connection to the astrobiology community, which is dedicated to searching for extraterrestrial life on Mars and beyond. While the concept of self-replicating machines was always in the back of his mind, it was only a few years ago that research programs emerged that allowed him to work on it in an official capacity.

As Ellery explained, his recent study considers self-replicating robots as a means of building infrastructure on the Moon (which would facilitate human exploration) but has applications far beyond that:

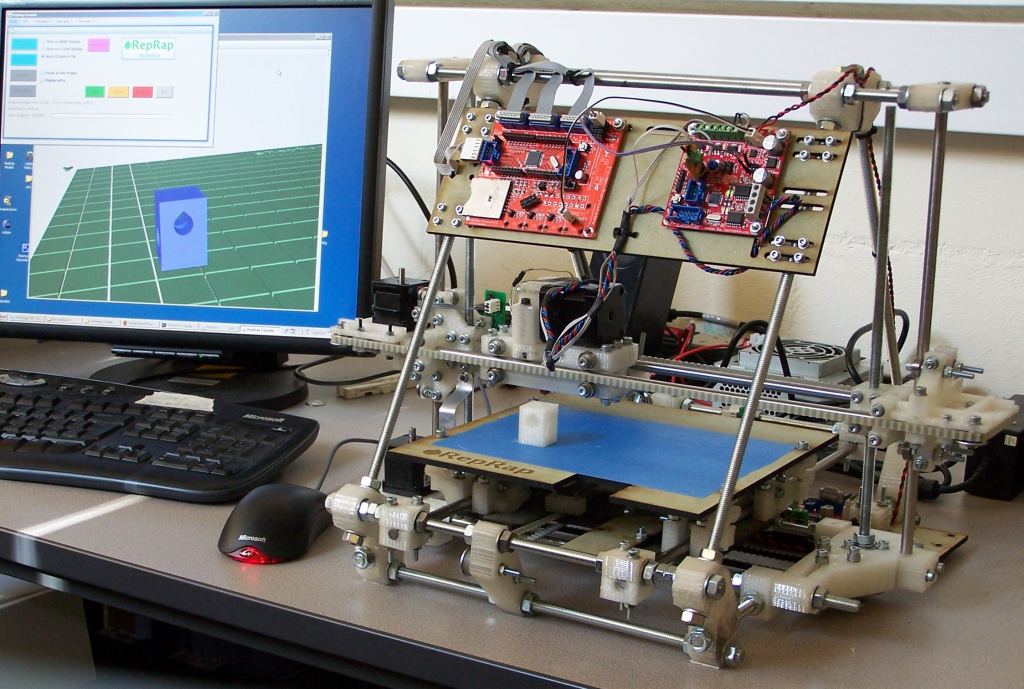

"I started moving away from the rovers and more into the In-Situ Resource Utilization [ISRU] side of things. You know, mining the moon and that sort of sort of stuff. I was actually doing some work in 3D printing as a mechanism to, uh, leverage resources from the Moon. So one of the things we've done is we've 3d printed an electric motor. This is a major step towards realizing 3d printing, robotic machines on the moon using Luna resources.

"The primary motivation in the back of my mind was I'm doing this to try and build a self replicating machine.And so in a way, it's come full circle to my original interest in using self replicating machines to explore the cosmos, and its implications for the SETI program, for the Fermi Paradox, and so on, which originally was what motivated down me down this road."

As with all things concerning technological advancement and humanity's future in space, there are undeniable issues that need to be discussed beforehand. When it comes to self-replicating machines, there is the question of whether they might grow beyond our control someday. If, perchance, some malfunctioned and began consuming everything in their environment (and multiplying exponentially in the process), the results would be disastrous. This speculative prospect is known as the "grey goo" scenario.
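To give a rough sense of why exponential multiplication is the worry, here is a minimal Python sketch that compares unchecked replication with a lineage that stops after a fixed number of generations. The function name `population_after`, the two-offspring rule, and all the numbers are illustrative assumptions, not figures from Ellery's study.

```python
def population_after(rounds, offspring_per_machine=2, max_generations=None):
    """Count every machine built after a number of replication rounds.

    Assumptions (hypothetical, for illustration only):
    - one seed machine starts the lineage;
    - in each round, every machine of the newest generation builds
      `offspring_per_machine` copies and then stops replicating;
    - no machine is ever retired or destroyed;
    - if `max_generations` is set, no generation beyond that depth is built.
    """
    newest = 1  # machines in the most recent generation
    total = 1   # all machines built so far, including the seed
    for generation in range(1, rounds + 1):
        if max_generations is not None and generation > max_generations:
            break  # the replication cap has been reached
        newest *= offspring_per_machine
        total += newest
    return total


if __name__ == "__main__":
    print(population_after(10))                      # uncapped, 10 rounds
    print(population_after(40))                      # uncapped, 40 rounds
    print(population_after(40, max_generations=5))   # capped at 5 generations
```

Under these assumptions the uncapped population roughly doubles every round, while the capped lineage levels off no matter how many rounds are allowed to pass.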

The Problem with "Grey Goo"

The term "grey goo" (or "gray goo") was coined by famed engineer and nanotechnology pioneer K. Eric Drexler. In his 1986 book, *Engines of Creation*, he poses a thought experiment where molecular self-replicating robots could escape from a laboratory and multiply ad infinitum, potentially leading to an ecological catastrophe. As Drexler described it:

"[E]arly assembler-based replicators could beat the most advanced modern organisms. 'Plants' with 'leaves' no more efficient than today's solar cells could out-compete real plants, crowding the biosphere with an inedible foliage. Tough, omnivorous 'bacteria' could out-compete real bacteria: they could spread like blowing pollen, replicate swiftly, and reduce the biosphere to dust in a matter of days. Dangerous replicators could easily be too tough, small, and rapidly spreading to stop - at least if we made no preparation. We have trouble enough controlling viruses and fruit flies."

While Drexler dismissed the likelihood of doomsday scenarios and later stated that he wished he'd "never used the term 'gray goo,'" he nevertheless felt the scenario needed to be taken seriously. As he stated in his book, this thought experiment made it clear that humanity "cannot afford certain kinds of accidents with replicating assemblers." The theory that such machines could run amok and become hostile to life has been explored as a possible resolution to the Fermi Paradox (known as the "Berserker Hypothesis").

Ellery echoes Drexler's statement by explaining how the "grey goo" scenario is more about perception than fact. Nevertheless, he agrees that it is a prospect that warrants discussion and action to ensure that worst-case scenarios can be avoided. As he put it:

"It's an idea more than anything else. I'm not convinced of how realistic it is. The problem is, it's the commonest question, 'what do you about uncontrolled self-replication?' Because people imagine a self-replicating machine to be like a virus, and it will spread. Which it could do, but only so far as there are resources available.

"[T]he thing that we have to be cognizant of is that self-replicate machines sound scary. We have to be aware that some people have knowledge and they can temper that knowledge, and so can appreciate rational argument. Other people are more focused on the fear, rather than the probability. But the idea behind this is being able to curb the self-replication process is to try and show that we are working on that problem - that we're not just going straight into this self-replicate machine without thinking about the potential consequences."

Therefore, says Ellery, it is incumbent upon us to develop preventative measures to ensure that self-replicating Von Neumann probes will behave themselves before we begin experimenting. To this end, he recommends a solution inspired by cellular biology.

Telomeres for Robots?

The key to Ellery's approach is the Hayflick Limit, a concept in biology that states that a normal human cell can only replicate and divide forty to sixty times before it breaks down due to programmed cell death (apoptosis). This limit is imposed by telomeres, the protective caps at the ends of chromosomes (the DNA strands inside the nuclei of animal and plant cells) that progressively shorten during cellular replication. In recent years, telomere research has advanced considerably thanks to growing interest in anti-aging treatments.

In much the same way, Ellery's proposal consists of machines with "memory modules" that play the role of chromosomes, which contain genetic memory in the form of DNA sequences. These modules are composed of volumetric arrays of magnetic core memory cells, each of which is programmed with "genetic instructions" in the form of zeros and ones. During the replication process, the "parent machine" will copy these instructions into every "offspring machine," and the telomeres will shorten with each replication.

In this case, the telomeres constitute a physical linear tail of blank memory cells that feed into the original to-be-read memory array. The number of these cells corresponds to the maximum number of replications, thereby imposing an artificial Hayflick Limit. As he detailed the process:

"When you start to copy [an array], you start at a certain point, and that's defined. And then, the copier goes from one to the other and copies each one with a blank magnetic core. The point is that when you copy it, you start on position one with your copier, and then you move on to the next one and start copying at the next point. You don't copy at the point to which you sat at.

"So what happens is that as you copy, you lose that first generation, and then the second generation you are missing the first position, and then you start at the second position, and you start copying. As long as you are not copying the first position - where you've positioned your initial point, your initial copier - it only copies on the next square. Then you're chopping out information.

"Now the, in order to retain the information in that block, you can add a tail of units. And so basically it copies each unit along the tail, and it's basically not copying the first one that lands on. So every generation is basically you get, you lose one cell of memory. So that first tale doesn't actually code for anything. It's just basically a telomere. So you just basically plan how many generations you need, [which] defines how long your tail is."

Potential Applications

In his study, Ellery explores several applications for self-replicating robots, starting with the industrialization of the Moon. In each case, the robots rely on additive manufacturing (3D printing) and in-situ resource utilization (ISRU) to manufacture the infrastructure necessary for human habitation, as well as more copies of themselves. This would allow for a long-term human presence on the Moon, consistent with the goals of NASA, the ESA, and the Chinese and Russian space agencies for the coming decades.

In particular, NASA has stressed that the main objective of the Artemis Program is to create a "sustained program of lunar exploration." This points to one of the main advantages of self-replicating machines: their ability to develop lunar and other celestial resources sustainably. Said Ellery:

"When we go to the Moon, we have two choices. At the moment, the interest is in going to the moon and mining water, and then separating the hydrogen and oxygen, then burning it as propellant. To me, that is wasteful. That's not sustainable because you're taking a scarce resource and then you're burning it, much like we've done on our Earth. Why are we taking all our bad habits with us?

"The other approach, of course, is to look at resources and work within the limits of those resources. So, if you look at minerals. Minerals are widespread: common rock-forming minerals on the Moon and asteroids. Basically, we would be sending these probes to the Moon and other locations in the Solar System, wherever we're intending to [build bases]. They're tectonically dead, they're just hunks of rock. So I see nothing wrong with utilizing those resources."

Beyond the Moon, Mars, and asteroid mining, there's the potential for creating space habitats (such as O'Neill Cylinders) at the Earth-Sun Lagrange Points and even terraforming operations. Last but not least (by any stretch of the imagination) is the potential for interstellar exploration and the possible settlement of exoplanets! Once again, Ellery stresses how this will have implications where the whole SETI vs. METI (Messaging Extraterrestrial Intelligence) debate is concerned:

"These Von Neumann probes potentially act as Scouts. They're scouting vehicles to investigate target locations before humans go there. So, of course, it makes no sense to send out a World Ship from here to another stellar system that you've never been to. You have no idea what's there. You want to send robotic machines out there first to give you information. So you understand what the implications are, what you need to take with you, what resources you need, and what resources you don't need.

"Self-replicating probes are the mechanism for doing any kind of interstellar space exploration, whichever way you want to look at it. You will always use these to try and scout beforehand and to send information, if only to locate other planets that are Earthlike, which are no use, or which ones might be of use (that you could adapt to). And perhaps most important would be to find out if there is intelligent life and does it present a threat. You send a Von Neumann probe to a planet, and the civilization doesn't know where it comes from. You send signals, they know exactly where the signal is coming from."

These and other considerations are paramount given how humanity finds itself on the verge of another "Space Age." With multiple plans to "return to the Moon," explore Mars, establish permanent bases and infrastructure, mine asteroids, and commercialize Low Earth Orbit (LEO), there are countless safety, legal, logistical, and ethical considerations that need to be worked out in advance. This is also essential when looking beyond the next few decades, where technological revolutions and the possibility of First Contact present certain existential risks.

In short, if we plan to deploy self-replicating probes to pave the way for human settlement, we need to ensure that they will not run amok and begin consuming everything in sight. This is especially true if these probes are to be used as scouts, exploring the galaxy and maybe acting as our ambassadors to extraterrestrial civilizations. Making "programmed cell death" a part of their design is an elegant solution that ensures our creations will be tempered by mortality. We can only hope that if an extraterrestrial civilization is already exploring the cosmos with Von Neumann probes, they took similar precautions!

Further Reading: Cambridge University

Universe Today
