Astronomers have a dark energy problem. On the one hand, we've known for years that the universe is not just expanding, but accelerating. There seems to be a dark energy that drives cosmic expansion. On the other hand, when we measure cosmic expansion in different ways we get values that don't quite agree. Some methods cluster around a higher value for the expansion rate, while other methods cluster around a lower one. On the gripping hand, something will need to give if we are to solve this mystery.

The obvious answer is that some of the cosmic expansion measurements must be wrong. The difficulty with this idea is that these measurements are very robust, and have been tested multiple times. Their results are also relatively close to one another. For years the uncertainties were large enough that they overlapped. It's only in the last few years, as the measurements have gotten more precise, that we've seen the problem. While some have argued dark energy should be eliminated, it's more likely that we just need some minor corrections to our model.

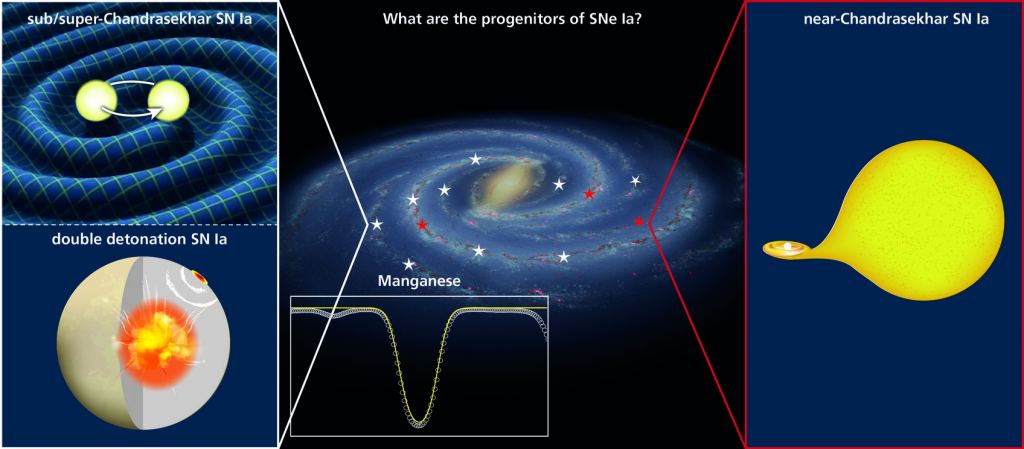

A possible correction could be to refine our understanding of so-called standard candles. One way to measure cosmic expansion is to use objects of a known brightness to measure galactic distances. For large galactic distances this is typically done using Type Ia supernovae. These can occur when a white dwarf is closely orbiting another star. Over time, the white dwarf can capture material from its companion, until it reaches a critical mass and explodes as a supernova. Since the critical mass is always the same, these supernovae should always explode with the same brightness.
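
To see how a standard candle turns into a distance, here is a minimal Python sketch using the standard distance modulus relation, m - M = 5 log10(d / 10 pc), where m is the apparent magnitude and M the absolute magnitude. The peak absolute magnitude of -19.3 is an assumed, commonly quoted round value for Type Ia supernovae, not a number from the study, and the function name is purely illustrative. The sketch also ignores redshift corrections and dust.

```python
import math

# Assumed typical peak absolute magnitude of a Type Ia supernova (illustrative only).
ASSUMED_ABS_MAG = -19.3

def luminosity_distance_pc(apparent_mag, absolute_mag=ASSUMED_ABS_MAG):
    """Distance in parsecs from the distance modulus m - M = 5*log10(d / 10 pc)."""
    return 10.0 ** ((apparent_mag - absolute_mag) / 5.0 + 1.0)

# Hypothetical supernova observed at a peak apparent magnitude of 15.7.
d_pc = luminosity_distance_pc(apparent_mag=15.7)
print(f"{d_pc / 1e6:.0f} Mpc")
```

The fainter the supernova appears, the farther away it must be, but only if the assumed absolute magnitude is right.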

But a new study of astrochemistry suggests this isn't always true. Different types of supernovae are identified by spectral lines in their light. Type I supernovae don't show any signs of hydrogen in their spectrum, while Type II supernovae do. The latter occur when the core of a large star collapses at the end of its life. Type Ia are Type I supernovae that also have a spectral line of ionized silicon. The silicon is produced when the mostly-carbon white dwarf explodes.

In this new study, the team was studying cosmic manganese, and how it has formed over time. Both types of supernovae produce manganese, along with other elements such as iron. But each type produces a different ratio of manganese to iron. When the team measured this ratio over cosmic time, they found it stayed quite constant. This is surprising since the known rates of Type I and Type II supernovae suggest the ratio of manganese to iron should increase over time.

One way this discrepancy could be resolved is if Type Ia supernovae are more variable than we think. The usual model suggests Type Ia white dwarfs explode at or near their critical mass limit, but other models suggest they could undergo staged detonations. These could occur when an initial instability creates a shock wave in the star that triggers an explosion before the star reaches critical mass. Or the collision of two white dwarfs could create a multi-stage explosion that looks similar to the standard Type Ia supernova.

For the cosmic manganese/iron ratio to remain constant over time, about three-quarters of Type Ia supernovae would need to be of these other varieties. If that's true, then our standard candle isn't so standard after all, and measurements of dark energy using this method could be wrong.
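
To get a feel for why this matters, here is a rough Python sketch of how a wrong brightness assumption skews a distance. It relies on the standard distance modulus relation, m - M = 5 log10(d / 10 pc), where m is the apparent magnitude and M the absolute magnitude. The 0.2 magnitude offset is a made-up illustrative number, not a result from the study, and the function name is hypothetical.

```python
def inferred_to_true_distance_ratio(true_minus_assumed_mag):
    """Ratio of inferred to true distance when the assumed absolute
    magnitude is off by the given amount (positive means the supernova
    is really fainter than assumed)."""
    return 10.0 ** (true_minus_assumed_mag / 5.0)

# Hypothetical example: the supernova is 0.2 magnitudes fainter than assumed.
ratio = inferred_to_true_distance_ratio(0.2)
print(f"Inferred distance is {100 * (ratio - 1):.1f}% too large")
```

With these assumed numbers the inferred distance comes out just under 10 percent too large, which is the kind of error that would carry over into estimates of cosmic expansion.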

While the variance of supernovae is one possibility, this study doesn't prove that supernova measurements of dark energy are wrong. We'll need more studies to see whether this suggested variation is correct.

Reference: Eitner, P., et al. "Observational constraints on the origin of the elements. III. The chemical evolution of manganese and iron."

Universe Today
