Perhaps the greatest and most frustrating mystery in cosmology is the Hubble tension problem. Put simply, all the observational evidence we have points to a Universe that began in a hot, dense state and has been expanding ever since to become the Universe we see today. Every measurement of that expansion agrees with this picture, but the measurements don't agree on exactly what the rate of expansion is. We can measure expansion in lots of different ways, and while the results are in the same general ballpark, their uncertainties are now so small that they don't overlap. There is no value for the Hubble parameter that falls within the uncertainty of all measurements, hence the problem.
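To make "don't overlap" concrete, here is a minimal Python sketch of how the disagreement between two independent measurements is often expressed in units of their combined uncertainty. The two values below are illustrative assumptions, chosen to resemble commonly quoted early-Universe and distance-ladder results; they are not taken from this article, and the calculation assumes independent, Gaussian errors.

```python
import math

def tension_sigma(h0_a, err_a, h0_b, err_b):
    """Difference between two independent measurements, in units of
    their combined (quadrature-summed) 1-sigma uncertainty."""
    return abs(h0_a - h0_b) / math.hypot(err_a, err_b)

# Illustrative values in km/s/Mpc (assumptions, not from the article).
early_universe = (67.4, 0.5)
distance_ladder = (73.0, 1.0)

print(f"tension: {tension_sigma(*early_universe, *distance_ladder):.1f} sigma")
```

A result of a few sigma or more means the two error bars are far enough apart that chance alone is an unlikely explanation.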

Of course, most of these measurements depend on a long chain of observations. When we measure cosmic expansion using distant supernovae, for example, the result depends on the derived distances of these supernovae as found through the cosmic distance ladder, where ever greater distances are determined based on the distances of closer things. So, from parallax we measure nearby stellar distances, use that to calibrate a type of variable star known as Cepheid variables, use Cepheids to measure galactic distances in our local group, use that to standardize the brightness of Type Ia supernovae, and finally use those supernovae to measure the most distant galaxies.

Each step in the cosmic distance ladder has a certain amount of uncertainty and this carries on to the next level. So, if one kind of distance measure happens to be really off, that would throw off our measure of cosmic expansion for any method that depends upon the distance ladder. As a result, astronomers have started to take a very close look at various ladder steps, looking for an error that would solve the tension problem. Much of that has focused on Cepheid variable stars.
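As a rough illustration of how uncertainty accumulates from rung to rung, the sketch below combines independent fractional uncertainties in quadrature. The rung names follow the ladder described above, but the numbers are made up for the example and do not come from any study.

```python
import math

def combined_fractional_error(rung_errors):
    """Combine independent fractional uncertainties in quadrature."""
    return math.sqrt(sum(e ** 2 for e in rung_errors))

# Hypothetical fractional uncertainties for each rung (made-up numbers).
ladder = {
    "parallax": 0.01,
    "cepheids": 0.02,
    "type_ia_supernovae": 0.02,
}

total = combined_fractional_error(ladder.values())
print(f"combined fractional uncertainty: {total:.3f}")
```

Quadrature only describes random, independent errors. A systematic offset in one rung would not average out like this; it would shift every distance that depends on that rung by the same fraction, which is why astronomers check each rung for hidden bias.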

Cepheid variables are a type of variable star whose brightness pulsates with a period that is tightly related to the star's overall luminosity: the more luminous the Cepheid, the longer its period. This period-luminosity relation was discovered by Henrietta Leavitt in the early 1900s, and has been central to cosmology ever since. If you measure the period of a Cepheid, you know its actual brightness and can compare that to its apparent brightness to determine its distance. Cepheids were used by Edwin Hubble to discover cosmic expansion in the first place, and the method has proven quite reliable.
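The period-to-distance step can be sketched in a few lines of Python. The relation is assumed here to take the linear form M = slope × (log10 P − 1) + zero point, and the coefficients are illustrative visual-band values, not the calibration used in the study discussed below. The sketch also ignores dust and metallicity corrections.

```python
import math

# Illustrative V-band coefficients for M = SLOPE * (log10(P) - 1) + ZERO_POINT.
# These are assumptions for the sketch, not the calibration used in the study.
SLOPE = -2.43
ZERO_POINT = -4.05

def absolute_magnitude(period_days):
    """Absolute magnitude predicted from the pulsation period."""
    return SLOPE * (math.log10(period_days) - 1.0) + ZERO_POINT

def distance_parsecs(apparent_mag, period_days):
    """Invert the distance modulus m - M = 5 * log10(d / 10 pc)."""
    modulus = apparent_mag - absolute_magnitude(period_days)
    return 10.0 ** (modulus / 5.0 + 1.0)

# Hypothetical Cepheid: 10-day period, apparent magnitude 20.95.
print(f"{distance_parsecs(20.95, 10.0):.3e} pc")
```

Because magnitudes run backwards, a longer period gives a more negative absolute magnitude, which means a more luminous star.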

But over the years we've found that Leavitt's period-luminosity relation is a bit more subtle than originally thought. For example, we now know that the relation between a Cepheid's period and its luminosity shifts slightly depending on the star's metallicity and other factors. Perhaps there's some variation in the data we've missed.

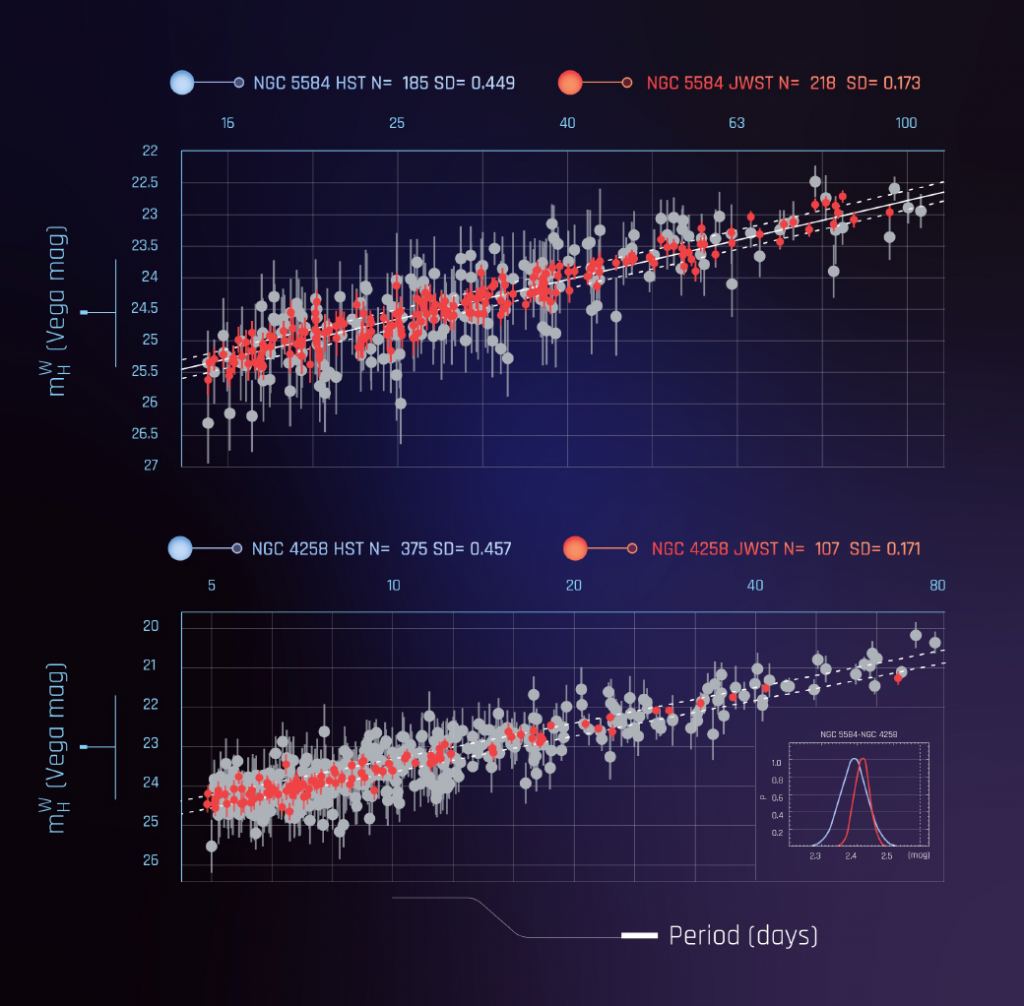

A few years ago, Cepheid observations from Hubble were used to see if adjustments in the period-luminosity relation could account for the Hubble tension, but the results didn't look promising. Now a study using JWST observations has been released. One advantage of JWST over Hubble is that Webb observes Cepheids in infrared light, which penetrates interstellar dust more readily. The Webb observations are also better at addressing the issue of "crowding," where light from the Cepheid is blended with the light of neighboring stars in the same crowded field. So these latest results are the most accurate Cepheid observations we have. In this new study, the team looked at more than a thousand Cepheid variables and was able to pin down the distance relation for Cepheids with extreme precision. From this, they showed that Cepheid variable error can't account for the Hubble tension.

The Cepheid solution to the tension problem is ruled out at a statistical level of 8-sigma. In fields such as particle physics, a 5-sigma result is the conventional threshold for claiming a discovery, so the Hubble tension is very, very real. Whether it's spacetime structure, dark energy, or something we haven't yet discovered, there is something we simply don't understand about cosmic expansion.
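As a rough guide to what these sigma levels mean, the sketch below converts a number of standard deviations into the chance of seeing a fluctuation at least that large, assuming purely Gaussian errors. That assumption is a simplification, and the function name is just for illustration.

```python
import math

def two_sided_p_value(n_sigma):
    """Probability that a Gaussian variable lies more than n_sigma
    standard deviations from its mean, in either direction."""
    return math.erfc(n_sigma / math.sqrt(2.0))

for n in (3, 5, 8):
    print(f"{n}-sigma: p = {two_sided_p_value(n):.2e}")
```

At 5-sigma the probability is already below one in a million, and at 8-sigma it is many orders of magnitude smaller still.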

Reference: Riess, Adam G., et al. "JWST Observations Reject Unrecognized Crowding of Cepheid Photometry as an Explanation for the Hubble Tension at 8 sigma Confidence." *arXiv preprint* arXiv:2401.04773 (2024).

Universe Today
