Our best understanding of the Universe is rooted in a cosmological model known as LCDM. The CDM stands for Cold Dark Matter: most of the matter in the Universe isn't stars and planets, but a strange form of matter that is dark and nearly invisible. The L, or Lambda, represents dark energy. It is the symbol used in the equations of general relativity for the cosmological constant, which helps set the Hubble parameter, or the rate of cosmic expansion. Although the LCDM model matches our observations incredibly well, it isn't perfect. And the more data we gather on the early Universe, the less perfect it seems to be.

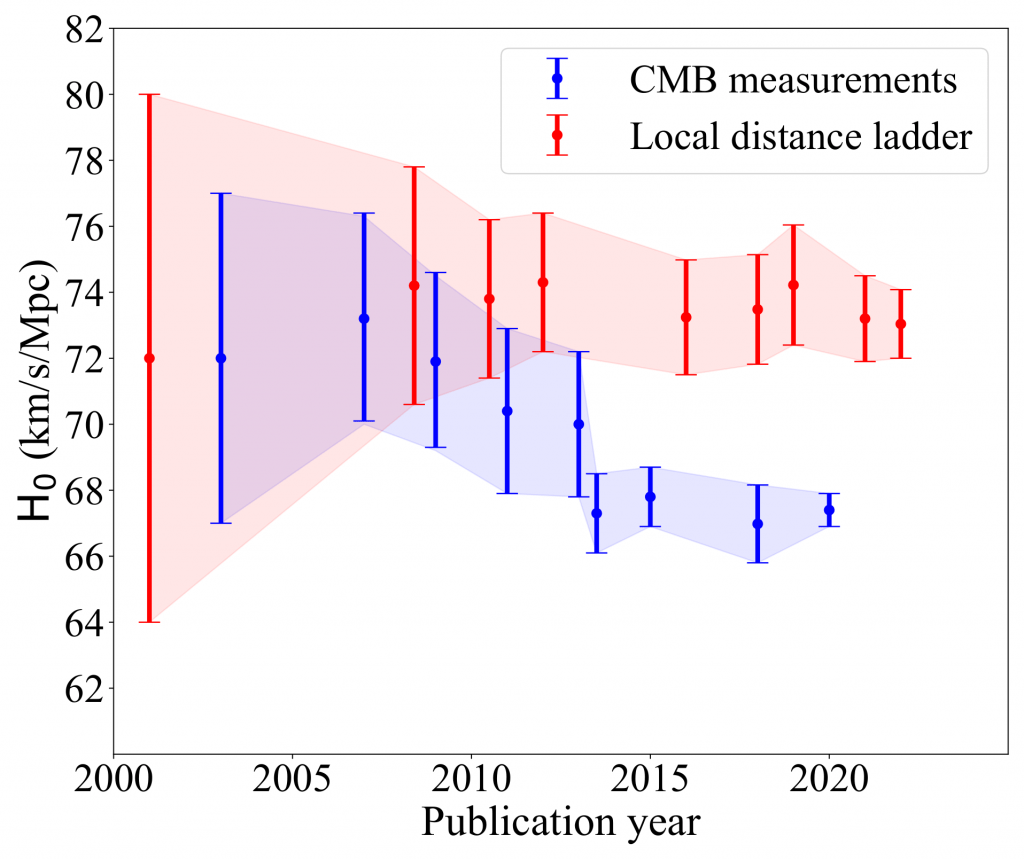

A central difficulty is that our various measures of the Hubble parameter increasingly aren't lining up. For example, if we use fluctuations in the cosmic microwave background to calculate the parameter, we get a value of about 68 km/s per megaparsec. If we look at distant supernovae to measure it, we get a value of around 73 km/s per megaparsec. In the past, the uncertainty of these values was large enough that they overlapped, but we've now measured them with such precision that they truly disagree. This is known as the Hubble Tension problem, and it's one of the deepest mysteries of cosmology at the moment.
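To get a feel for what "truly disagree" means, here is a minimal sketch that expresses the gap between two measurements in units of their combined uncertainty. The central values come from the text above; the error bars (0.5 and 1.0 km/s per megaparsec) are illustrative assumptions, not figures quoted in this article, and the function name `tension_sigma` is made up for the example.

```python
import math

def tension_sigma(value_a, sigma_a, value_b, sigma_b):
    """Gap between two independent measurements, in combined standard deviations."""
    return abs(value_a - value_b) / math.sqrt(sigma_a**2 + sigma_b**2)

# Central values from the text; the uncertainties are ASSUMED for illustration.
cmb = (68.0, 0.5)         # km/s per megaparsec
supernovae = (73.0, 1.0)  # km/s per megaparsec

print(f"Tension: {tension_sigma(*cmb, *supernovae):.1f} sigma")

# With the much larger error bars of earlier decades, the same gap is unremarkable.
print(f"With +/-5 on each: {tension_sigma(68.0, 5.0, 73.0, 5.0):.1f} sigma")
```

The same five-unit gap goes from a curiosity to a serious problem purely because the error bars shrank.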

Much of the effort to solve this mystery has focused on better understanding the nature of dark energy. In Einstein's early model, cosmic expansion is an inherent part of the structure of space and time: a cosmological constant that expands the Universe at a steady rate. But perhaps dark energy is an exotic scalar field, one that would allow a variable expansion rate or even an expansion that varies slightly depending on which direction you look. Maybe the rate was greater in the period of early galaxies, then slowed down, hence the different observations. We know so little about dark energy that there are lots of theoretical possibilities.

Perhaps tweaking dark energy will solve the Hubble Tension, but Sunny Vagnozzi doesn't think so. In a recent article, he outlines seven reasons to suspect dark energy won't be enough to solve the problem. It's an alphabetical list of data that shows just how deep this cosmological mystery is.

Ages of Distant Objects

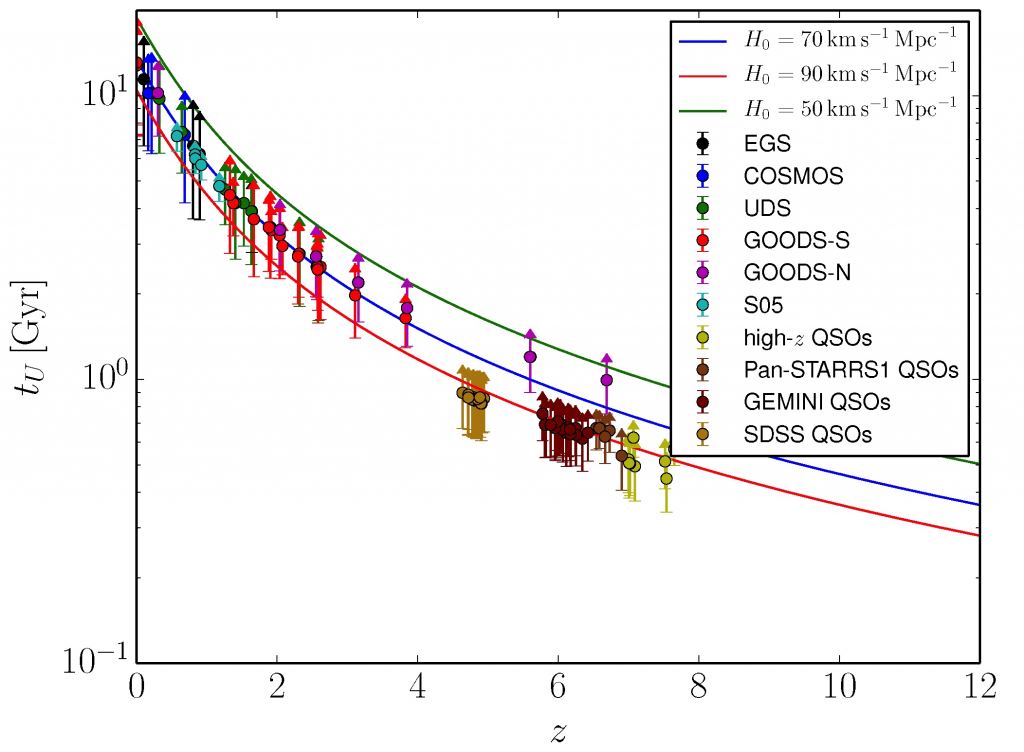

The idea behind this one is simple. If you know the age of a star or galaxy a billion light-years away, then you know the Universe must have been at least that old a billion years ago. If this age disagrees with LCDM, then LCDM must be wrong. For example, there are a few stars that appear to be older than the Universe, which big bang skeptics often point to as disproving the big bang. This doesn't work because the ages of these stars are uncertain enough that they could well be younger than the Universe. But you can expand upon the idea as a cosmological test. Determine the age of thousands of stars at various distances, then use statistics to gauge a minimum cosmological age at different epochs. Since an older Universe implies a slower expansion, that minimum age translates into an upper limit on the Hubble parameter.

Several studies have looked at this, drawing upon a range of sky surveys. Determining the age of stars and globular clusters is particularly difficult, so the resulting data is a bit fuzzy. While it's possible to fit the data to the range of Hubble parameters we have from direct measures, the age-distance data suggests the Universe is a bit older than LCDM allows. In other words, IF the age data is truly accurate, there is a discrepancy between cosmic age and stellar ages. That's a big IF, and this is far from conclusive, but it's worth exploring further.
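The link between cosmic age and the Hubble parameter can be sketched with the textbook formula for the age of a flat universe containing only matter and a cosmological constant. This is a rough illustration rather than the analysis used in the studies: it ignores radiation, the matter fraction of 0.3 is an assumed round number, and `age_gyr` is a name invented for the example.

```python
import math

KM_PER_MPC = 3.0857e19       # kilometres in one megaparsec
SECONDS_PER_GYR = 3.1557e16  # seconds in one billion years

def age_gyr(h0, omega_m=0.3):
    """Age in billions of years of a flat universe with matter and Lambda only.

    h0 is in km/s per megaparsec; omega_m is the ASSUMED matter fraction.
    """
    omega_lambda = 1.0 - omega_m
    # 1/H0 converted from (Mpc s / km) into billions of years.
    hubble_time_gyr = KM_PER_MPC / h0 / SECONDS_PER_GYR
    factor = (2.0 / (3.0 * math.sqrt(omega_lambda))) * math.asinh(
        math.sqrt(omega_lambda / omega_m)
    )
    return hubble_time_gyr * factor

for h0 in (68.0, 73.0):
    print(f"H0 = {h0:.0f} km/s/Mpc -> age of about {age_gyr(h0):.1f} billion years")
```

A higher Hubble parameter gives a younger Universe, which is why reliably old stars push against the higher measured values.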

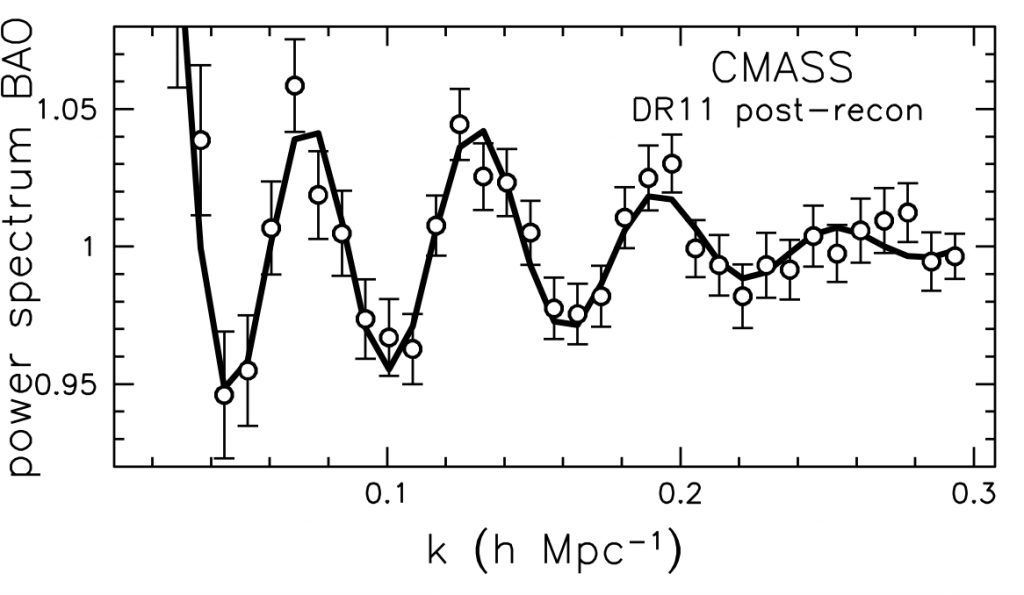

Baryon Acoustic Oscillation

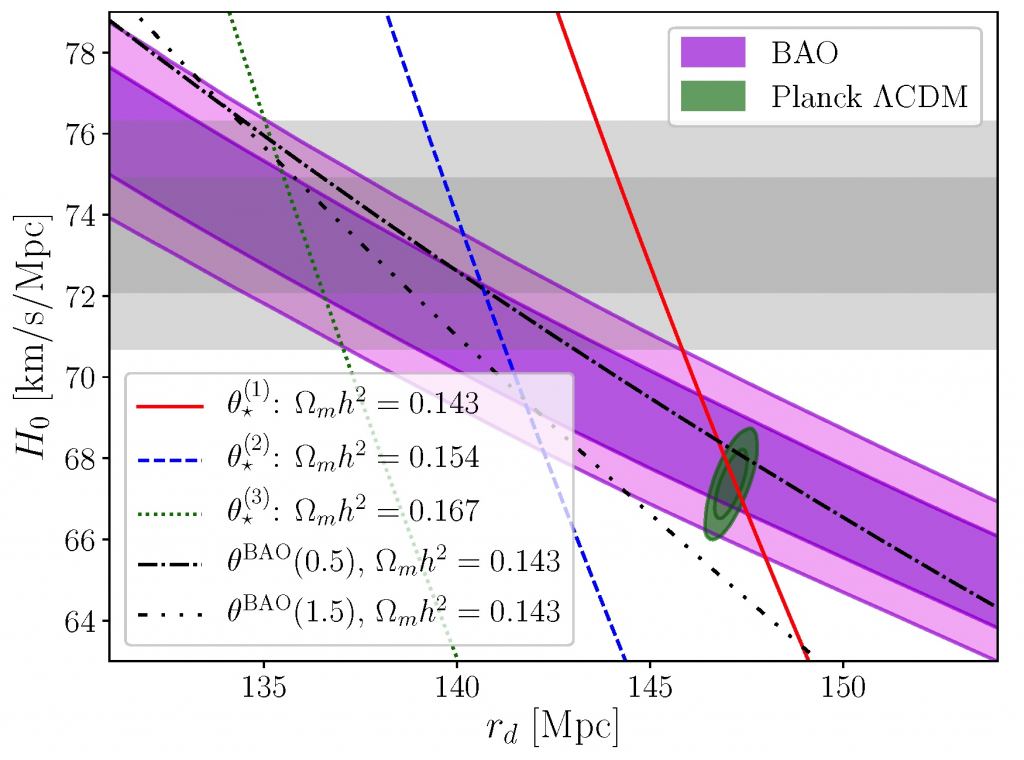

Regular matter is made of baryons and leptons. The protons and neutrons in an atom are baryons, and the electrons are leptons. So baryonic matter is the usual type of matter we see every day, as opposed to dark matter. Baryon Acoustic Oscillation (BAO) refers to the fluctuations of matter density in the early Universe. Back when the Universe was in a hot dense state, these fluctuations rippled through the cosmos like sound waves. As the Universe expanded, the more dense regions formed the seeds for galaxies and galactic clusters. The scale of those clusters is driven by cosmic expansion. So by looking at BAO across the Universe, we can study the evolution of dark energy over time.

What's nice about BAO is that it connects the distribution of galaxies we see today to the state of the Universe during the period of the cosmic microwave background (CMB). It's a way to compare the value of the early Hubble parameter with the more recent value. This is because the early expansion put a limit on how far acoustic waves could propagate. The higher the rate of expansion back then, the smaller the acoustic range. It's known as the acoustic horizon, and it depends not only on the expansion rate but also on the density of matter at the time. When we compare BAO and CMB observations, they do agree, but only for a level of matter on the edge of observed limits. In other words, if we get a better measure of the density of matter in the Universe, we could have a CMB/BAO tension just as we currently have a Hubble Tension.
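To see how the acoustic horizon depends on matter density, here is a rough numerical sketch of the standard integral for how far a sound wave could travel in the early Universe. Every number in it (the photon, radiation, and baryon densities, and the redshift of about 1060 at which the waves stall) is an assumed round value chosen for illustration, and the function name is made up; treat the output as approximate.

```python
import math

C_KM_S = 299792.458  # speed of light in km/s

def sound_horizon_mpc(h0=68.0, omega_m=0.31, omega_b_h2=0.0224,
                      z_drag=1060.0, steps=20000):
    """Approximate comoving acoustic horizon in megaparsecs (all inputs ASSUMED)."""
    h = h0 / 100.0
    omega_gamma = 2.47e-5 / h**2  # photons
    omega_r = 4.18e-5 / h**2      # photons plus neutrinos treated as massless
    omega_b = omega_b_h2 / h**2   # baryons
    omega_lambda = 1.0 - omega_m - omega_r

    a_drag = 1.0 / (1.0 + z_drag)
    da = a_drag / steps
    total = 0.0
    # Midpoint-rule integral of c_s / (a^2 H) over the scale factor a.
    for i in range(steps):
        a = (i + 0.5) * da
        baryon_loading = 3.0 * omega_b / (4.0 * omega_gamma) * a
        expansion = omega_r + omega_m * a + omega_lambda * a**4  # (a^2 H / H0)^2
        total += da / math.sqrt(3.0 * (1.0 + baryon_loading) * expansion)
    return (C_KM_S / h0) * total

for omega_m in (0.27, 0.31, 0.35):
    print(f"matter fraction {omega_m:.2f}: horizon of about "
          f"{sound_horizon_mpc(omega_m=omega_m):.0f} Mpc")
```

More matter means faster early expansion and a shorter acoustic range, so the agreement between BAO and CMB hinges on which matter density you plug in.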

Cosmic Chronometers

Both the supernova and cosmic microwave background measures of the Hubble parameter depend on a scaffold of interlocking models. The supernova measure depends on the cosmic distance ladder, where we use various observational models to determine ever greater distances. The CMB measure depends on the LCDM model, which has some uncertainty in its parameters such as matter density. Cosmic chronometers are observational measures of the Hubble parameter that aren't model dependent.

One of these measures uses astrophysical masers. Under certain conditions, hot matter in the accretion disk of a black hole can emit microwave laser light. Since this light has a very specific wavelength, any shift in that wavelength is due to relative motion or cosmic expansion. So we can measure the expansion rate directly from the overall redshift of the maser, and we can measure the distance from the scale of the accretion disk. Neither of these requires cosmological model assumptions.

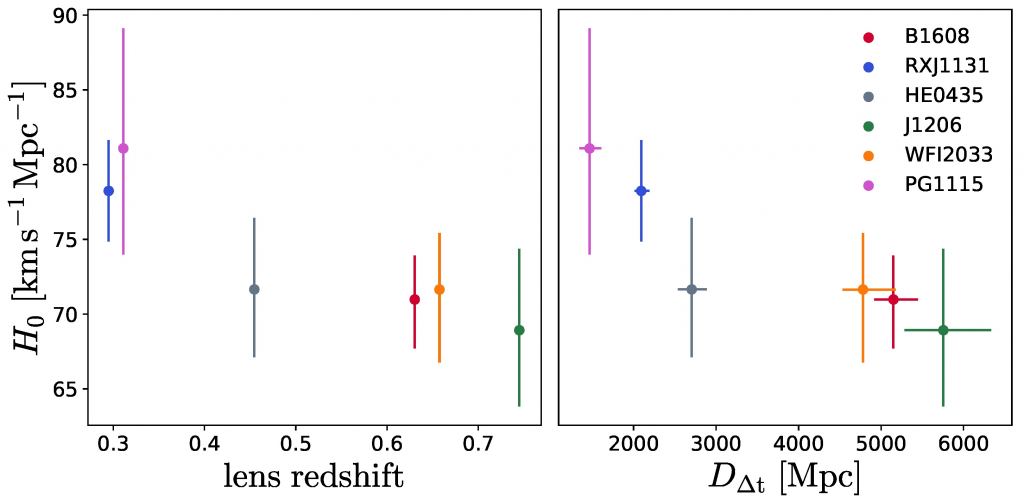

Another approach uses gravitational lensing. If a nearby galaxy happens to be between us and a distant supernova, the light from the supernova can be gravitationally lensed around the galaxy, creating multiple images of the supernova. Since the light of each image travels a different path, each image takes a different amount of time to reach us. When we are lucky we can see the supernova multiple times. By combining these observations we can get a direct measure of the Hubble parameter, again without any model assumptions.

The maser method gives a Hubble parameter of about 72 to 77 (km/s)/Mpc, while the gravitational lensing approach gives a value of about 63 to 70 (km/s)/Mpc. These results are tentative and fuzzy, but it looks as if even model-independent measures of the Hubble parameter won't eliminate the tension problem.
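A quick way to see why these two methods don't settle the matter is to compare their ranges with the two headline values quoted earlier. The sketch below uses only numbers from this article; the helper names `contains` and `overlap` are invented for the example.

```python
def contains(interval, value):
    """True if value lies inside the closed interval (low, high)."""
    low, high = interval
    return low <= value <= high

def overlap(a, b):
    """True if two closed intervals share at least one point."""
    return max(a[0], b[0]) <= min(a[1], b[1])

# All values in (km/s)/Mpc, as quoted in the text.
maser = (72.0, 77.0)
lensing = (63.0, 70.0)
cmb_value = 68.0
supernova_value = 73.0

print("maser range includes CMB value:      ", contains(maser, cmb_value))
print("maser range includes supernova value:", contains(maser, supernova_value))
print("lensing range includes CMB value:    ", contains(lensing, cmb_value))
print("lensing range includes supernova value:", contains(lensing, supernova_value))
print("maser and lensing ranges overlap:    ", overlap(maser, lensing))
```

Each model-independent method sides with a different camp, and the two ranges don't even touch.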

Descending Redshift

Within the standard model, the Hubble constant we infer should not depend on how far away we look to measure it. The Lambda is a cosmological constant, which means that the density of dark energy is uniform throughout time and space. Some exotic unknown energy might drive additional expansion, but in the simplest model, it should be constant. So for galaxies that are not too distant, redshift should be directly proportional to distance. There may be some small variation in redshift due to the actual motion of galaxies through space, but overall there should be a simple redshift relation.
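The simple redshift relation is easy to put into numbers. The sketch below uses the low-redshift approximation, where redshift is just the Hubble parameter times distance divided by the speed of light. The 100 megaparsec distance is an arbitrary example and `low_redshift` is a name chosen for illustration; the approximation breaks down for truly distant galaxies.

```python
C_KM_S = 299792.458  # speed of light in km/s

def low_redshift(distance_mpc, h0):
    """Approximate redshift of a nearby galaxy from Hubble's law (valid for small z)."""
    return h0 * distance_mpc / C_KM_S

distance = 100.0  # megaparsecs, an arbitrary example distance
for h0 in (68.0, 73.0):
    print(f"H0 = {h0:.0f} (km/s)/Mpc -> z of about {low_redshift(distance, h0):.4f}")
```

If the Hubble parameter really is a single constant, every galaxy should fall on one straight line of redshift against distance, give or take its own motion through space.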

But there's some evidence that the Hubble parameter isn't constant. A survey of distant quasars gravitationally lensed by closer galaxies calculated the Hubble value at six different redshift distances. The uncertainties of these values are fairly large, but the results don't seem to cluster around a single value. Instead, the Hubble parameter for closer lensings seems higher than that for more distant lensings. The best fit puts the Hubble parameter at about 73 (km/s)/Mpc, but that assumes a constant value.

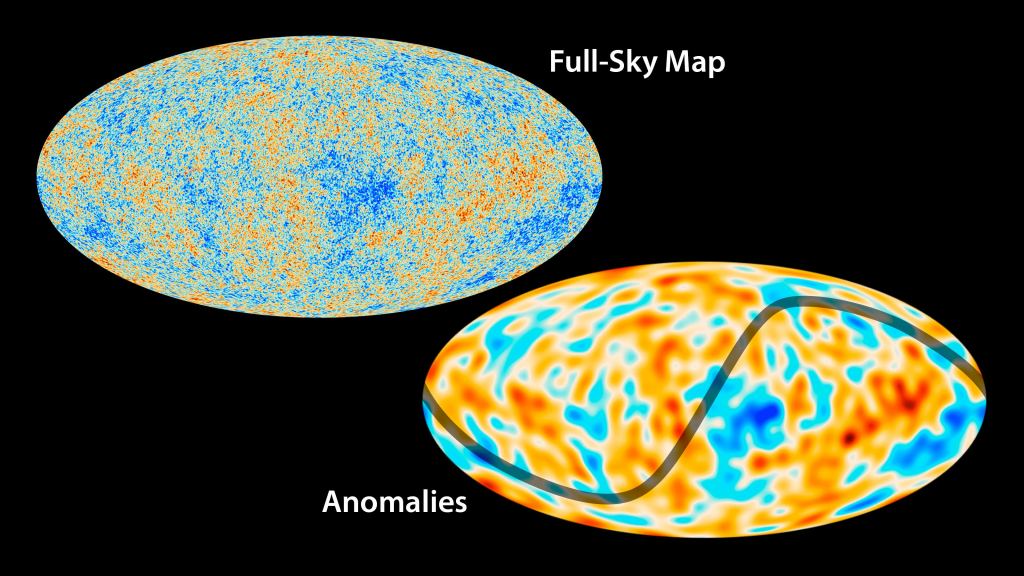

Early Integrated Sachs-Wolfe Effect

When we look at light from the cosmic microwave background, we don't have a perfectly clear view. The CMB light has to travel across billions of light-years to reach us, and that means it often has to pass through dense regions of galaxy clusters and the vast voids between galaxies. As it does so, the light can be red-shifted or blue-shifted by the gravitational variations of the clusters and voids. As a result, regions of the CMB can appear warmer or cooler than they actually are. This is known as the Integrated Sachs-Wolfe (ISW) effect.

When we look at fluctuations within the CMB, most of them are on a scale predicted by the LCDM model, but there are some larger scale fluctuations that are not, which we call anomalies. Most of these anomalies can be accounted for by the Integrated Sachs-Wolfe effect. This matters for cosmic expansion because most of the ISW happens in the early period of the Universe, so it puts limits on how much you can tweak dark energy to address the tension problem. You can't simply shift the early expansion rate without also accounting for the CMB anomalies on some level.

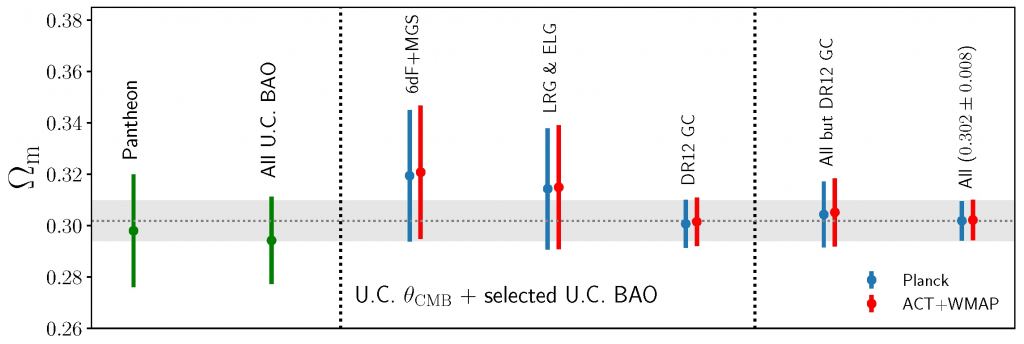

Fractional Matter Density Constraints

In general, our cosmological model depends on two parameters: the fraction of dark energy and the fraction of matter. Just as dark energy drives cosmic expansion, working to move galaxies away from each other, dark matter and regular matter work against cosmic expansion. We mostly see the effect of matter density through the clustering of galaxies, but the overall density of matter in the Universe also dampens the observed expansion rate.

The cosmic matter density can be determined by many of the same observational tests used to determine cosmic expansion. All of them are in general agreement that the matter density is about 30% of the total mass-energy of the Universe, but the early Universe observations trend a bit lower. That isn't a problem per se, but increasing the expansion rate of the early Universe would tend to widen this gap, not narrow it.

Galaxy Power Spectrum

Power spectrum in this case is a bit of a misnomer. It doesn't have to do with the amount of energy a galaxy has, but rather the scale at which galaxies cluster. If you look at the distribution of galaxies across the entire Universe, you see small galaxy clusters, big galaxy clusters, and everything in between. At some scales clusters are more common and at others more rare. So one useful tool for astronomers is to create a "power spectrum" plotting the number of clusters at each scale.

The galaxy power spectrum depends upon both the matter and energy of the Universe. It's also affected by the initial hot dense state of the Big Bang, which we can see through the cosmic microwave background. Several galactic surveys have measured the galaxy power spectrum, such as the Baryon Oscillation Spectroscopic Survey (BOSS). Generally, they point to a lower rate of cosmic expansion, closer to that of the cosmic microwave background results.

So What Does All This Mean?

As is often said, it's complicated. One thing that should be emphasized is that none of these results in any way disprove the big bang. On the whole, our standard model of cosmology is on very solid ground. What it does show is that the Hubble Tension problem isn't the only one hovering at the edge of our understanding. There are lots of little mysteries, and they are all interconnected in non-trivial ways. Simply tweaking dark energy isn't likely to solve all of them. It will likely take a combination of adjustments all coming together. Or it might mean a radical new understanding of some basic physics.

We have come a tremendous way from our early understanding of the cosmos. We know vastly more than we did even a decade or two ago. But the power of science is rooted in not resting on our success. No matter how successful our models are, they are, in the end, never enough.

Reference: Vagnozzi, Sunny. "Seven Hints That Early-Time New Physics Alone Is Not Sufficient to Solve the Hubble Tension." Universe 9.9 (2023): 393.

Universe Today