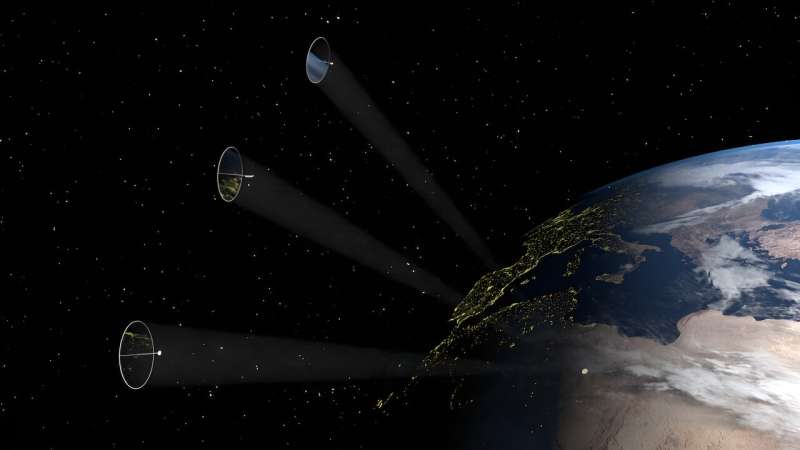

Solar power is a booming industry right now as we all strive to run our lives with minimum carbon footprint. Solar is a relatively easy way to get clean electricity but of course we are limited to the hours then Sun is above the horizon. Solar panels in space have been muted before but the costs and technology to transmit power to Earth is prohibitive. An alternative approach has been explored by a team of engineers who have been looking at the possibility of deploying giant reflectors into space.

Continue reading “Reflectors in Space Could Make Solar Power More Effective”We’ll be Building Self-Replicating Probes to Explore the Milky Way Sooner Than you Think. Why Haven’t ETIs?

The future can arrive in sudden bursts. What seems a long way off can suddenly jump into view, especially when technology is involved. That might be true of self-replicating machines. Will we combine 3D printing with in-situ resource utilization to build self-replicating space probes?

One aerospace engineer with expertise in space robotics thinks it could happen sooner rather than later. And that has implications for SETI.

Going 1 Million Miles per Hour With Advanced Propulsion

Advanced propulsion breakthroughs are near. Spacecraft have been limited to slow chemical rocket speeds and weak ion drives for decades. However, speeds over one million miles per hour are possible before 2050. Surprising new innovations are emerging from technically feasible projects.

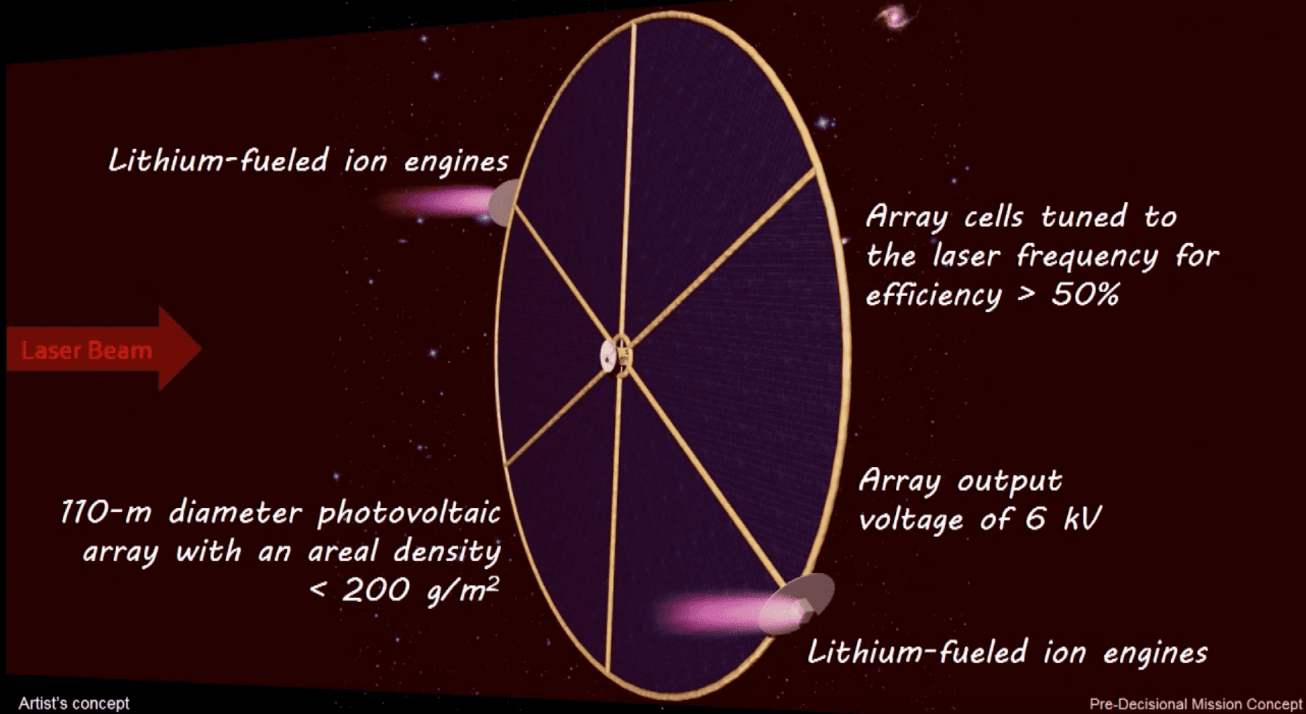

The NASA Innovative Advanced Concepts (NIAC) program is funding two high-potential concepts. New ion drives could offer ten times better specific impulse (Isp) and power levels ten thousand times higher. Antimatter propulsion and multi-megawatt ion drives are being developed.
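To put those numbers in perspective, here is a rough sketch that converts one million miles per hour into kilometers per second and then uses the standard Tsiolkovsky rocket equation to estimate the mass ratio a single-stage vehicle would need at different specific impulses. The Isp values are illustrative assumptions (a typical chemical engine, a typical ion drive, and a hypothetical drive ten times better), not figures from the NIAC studies.

```python
import math

MPH_TO_MS = 1609.344 / 3600.0  # metres per mile divided by seconds per hour
G0 = 9.80665                   # standard gravity in m/s^2


def mph_to_kms(mph):
    """Convert miles per hour to kilometres per second."""
    return mph * MPH_TO_MS / 1000.0


def mass_ratio(delta_v_ms, isp_s):
    """Tsiolkovsky rocket equation: m0/mf = exp(delta_v / (Isp * g0))."""
    return math.exp(delta_v_ms / (isp_s * G0))


target_kms = mph_to_kms(1_000_000)
print(f"1,000,000 mph is about {target_kms:.1f} km/s")

# Assumed, illustrative specific impulses in seconds.
for label, isp in [("chemical", 450), ("ion drive", 3_000), ("10x ion drive", 30_000)]:
    ratio = mass_ratio(target_kms * 1000.0, isp)
    print(f"{label:>14}: Isp {isp:>6} s -> initial/final mass ratio {ratio:.3g}")
```

The exponential is the point: under these assumptions, a tenfold gain in Isp turns an impossible mass ratio into one of only a few to one.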


Astronomy Cast Ep. 331: Arthur C. Clarke’s Technologies

In our previous episode, we introduced Arthur C. Clarke, the amazing man and science fiction writer. Today we’ll be discussing his legacy and ideas on space exploration. You’ll be amazed to hear how many of the ideas we take for granted were invented or just accurately predicted by Arthur C. Clarke.


100 Year Starship Project Has a New Leader


You may have heard by now about the 100 Year Starship project, a new research initiative to develop the technology required to send a manned mission to another star. The project is jointly sponsored by NASA and the Defense Advanced Research Projects Agency (DARPA). It could take 100 years just to make such a trip feasible, hence the name. So we’re a long way off from naming any crew members or a starship captain, but the project itself does have a new leader, a former astronaut.

Mae Jemison, a former Space Shuttle astronaut, has been appointed to the position by DARPA. She was also the first African-American woman to go into space, in 1992. Her own non-profit educational organization, the Dorothy Jemison Foundation for Excellence (named in honor of her late mother), was chosen to work with DARPA, receiving a $500,000 contract. That funding is just seed money, to start the process of developing the framework needed for such an ambitious undertaking. The focus at this point is to create a foundation that can last long enough to research the technology required, rather than the actual government-funded building of the spacecraft.

As stated by the proposal, the goal is to “develop a viable and sustainable non-governmental organization for persistent, long-term, private-sector investment into the myriad of disciplines needed to make long-distance space travel viable.”

From the project’s mission statement:

The 100 Year Starship™ (100YSS™) study is an effort seeded by DARPA to develop a viable and sustainable model for persistent, long-term, private-sector investment into the myriad of disciplines needed to make long-distance space travel practicable and feasible.

The genesis of this study is to foster a rebirth of a sense of wonder among students, academia, industry, researchers and the general population to consider “why not” and to encourage them to tackle whole new classes of research and development related to all the issues surrounding long duration, long distance spaceflight.

DARPA contends that the useful, unanticipated consequences of such research will have benefit to the Department of Defense and to NASA, as well as the private and commercial sector.

This endeavor will require an understanding of questions such as: how do organizations evolve and maintain focus and momentum for 100 years or more; what models have supported long-term technology development; what resources and financial structures have initiated and sustained prior settlements of “new worlds?”

With today’s technology, it would take about 100,000 years to reach just the nearest star system, Alpha Centauri. That time would hopefully be reduced significantly with the development of new, faster propulsion methods.

The dream of travelling to the stars may still be a long way from becoming reality, but we are getting closer. Ad astra!
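A quick back-of-the-envelope check shows where a figure of that order comes from. The sketch below assumes a distance of about 4.37 light-years to Alpha Centauri and a cruise speed of roughly 17 km/s, similar to Voyager 1; both values are assumptions added here for illustration, not numbers from the project.

```python
C_KM_S = 299_792.458  # speed of light in km/s


def travel_years(distance_ly, speed_km_s):
    """Years needed to cover distance_ly light-years at a constant speed.

    Light covers one light-year per year, so the travel time is the
    distance in light-years scaled by c / v.
    """
    return distance_ly * C_KM_S / speed_km_s


ALPHA_CEN_LY = 4.37  # assumed distance to Alpha Centauri in light-years

for label, speed in [("Voyager-like probe (17 km/s)", 17.0),
                     ("10% of light speed", 0.1 * C_KM_S)]:
    print(f"{label}: about {travel_years(ALPHA_CEN_LY, speed):,.0f} years")
```

At that assumed speed the trip takes tens of thousands of years, the same order of magnitude as the estimate above, while a craft at a tenth of light speed would need only a few decades.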

More information about the 100 Year Starship project is here.

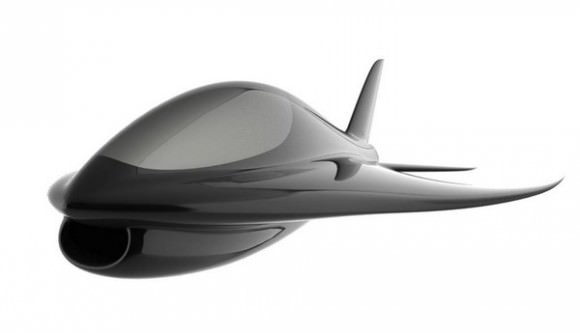

What Will Airplanes of the Future Look Like?


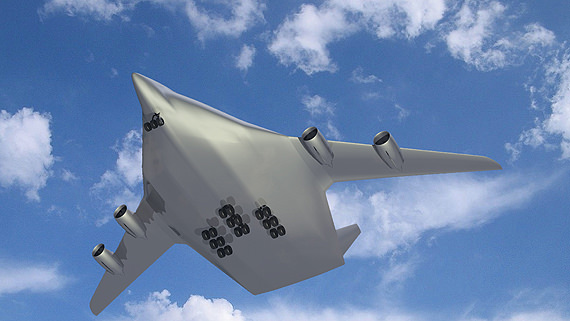

Will aircraft of the future look something like this? Project NACRE (New Aircraft Concepts Research) has this wide-body aircraft in mind for future flyers, designed for long-haul flights and able to accommodate up to 750 passengers. The simulated flying wing measures 65 meters long and 19 meters high, with a wingspan of nearly 100 meters and a maximum take-off weight of roughly 700 tons. The German Aerospace Center (Deutsches Zentrum für Luft- und Raumfahrt; DLR) has been performing flight tests to simulate and study the flight characteristics of large ‘flying wing’ configurations to prepare for future aircraft designs. The tests use a research aircraft called ATTAS (Advanced Technologies Testing Aircraft System), whose special software and hardware can mimic the flight characteristics and performance of an entirely different aircraft.

What are some other future airplane concepts?

Airbus has this concept in mind – called a fantasy plane – that could be more fuel efficient because of its long, curled wings, a U-shaped tail, and a lightweight body. This could be the way planes look in 2030, Airbus says, and will have advanced interior systems, and be much quieter than current aircraft.

This supersonic aircraft concept by Boeing, nicknamed Icon II, has V-tails and upper surface engines. It can carry 120 passengers in a two-class, single-aisle interior and cruise at Mach 1.6 to Mach 1.8 with a range of about 5,000 nautical miles.

Another concept from Boeing is the SUGAR Volt, which includes an electric battery and gas turbine hybrid propulsion system that can reduce fuel burn by more than 70 percent and total energy use by 55 percent. This fuel burn reduction and the “greening” of the electrical power grid can greatly reduce emissions of life cycle carbon dioxide and nitrous oxide. Hybrid electric propulsion also has the potential to shorten takeoff distance and reduce noise.

This one is called the SmartFish, and utilizes a “lifting body” design, which means that the entire aircraft works to provide lift, rather than just the wings. The concept for this plane is a slender shape and composite material construction, which means less drag, and thus less thrust required for flight. The wing and fuselage form one integrated, futuristic-looking design. This plane can fly without slats, flaps, or spoilers, meaning increased fuel efficiency. See more on the SmartFish website.

Those are just a few concepts being tested and designed for the future of flight. You can read more about NASA’s work on the future of aeronautics here.

NASA’s Ames Director Announces “100 Year Starship”

The Director of NASA’s Ames Research Center, Pete Worden, has announced an initiative to move space flight to the next level. This plan, dubbed the “Hundred Year Starship,” has received $100,000 from NASA and $1 million from the Defense Advanced Research Projects Agency (DARPA). He made his announcement on Oct. 16. Worden is also hoping to include wealthy investors in the project. NASA has yet to provide any official details on the project.

Worden also has expressed his belief that the space agency was now directed toward settling other planets. However, given the fact that the agency has been redirected toward supporting commercial space firms, how this will be achieved has yet to be detailed. Details that have been given have been vague and in some cases contradictory.

The Ames Director went on to expound how these efforts will seek to emulate the fictional starships seen on the television show Star Trek. He stated that the public could expect to see the first prototype of a new propulsion system within the next few years. Given that NASA’s FY 2011 Budget has had to be revised and has yet to go through Appropriations, this time estimate may be overly optimistic.

One of the ideas being proposed is a microwave thermal propulsion system. This form of propulsion would eliminate the massive amount of fuel required to send craft into orbit. The power would be “beamed” to the spacecraft. Either a laser or microwave emitter would heat the propellant, thus sending the vehicle aloft. This technology has been around for some time, but has yet to be actually applied in a real-world vehicle.

The project is run by Dr. Kevin L.G. Parkin, who described it in his PhD thesis and invented the equipment used. Along with him are David Murakami and Creon Levit. One of the previous workers on the program went on to found his own company in the hopes of commercializing the technology used.

For Worden, the first locations that man should visit utilizing this revolutionary technology would not be the moon or even Mars. Rather he suggests that we should visit the red planet’s moons, Phobos and Deimos. Worden believes that astronauts can be sent to Mars by 2030 for around $10 billion – but only one way. The strategy appears to resemble the ‘Faster-Better-Cheaper’ craze promoted by then-NASA Administrator Dan Goldin during the 1990s.

DARPA is a branch of the U.S. Department of Defense whose purview is the development of new technology to be used by the U.S. military. Some previous efforts that the agency has undertaken include the first hypertext system, as well as other computer-related developments that are used every day. DARPA has worked on space-related projects before, including light-weight satellites (LIGHTSAT), the X-37 space plane, the FALCON Hypersonic Cruise Vehicle (HCV) and a number of other programs.

Source: Kurzweil

Astronomy: The Next Generation

In some respects, the field of astronomy has been a rapidly changing one. New advances in technology have allowed for exploration of new spectral regimes, new methods of image acquisition, new methods of simulation, and more. But in other respects, we’re still doing the same thing we were 100 years ago. We take images, look to see how they’ve changed. We break light into its different colors, looking for emission and absorption. The fact that we can do it faster and to further distances has revolutionized our understanding, but not the basal methodology.

But recently, the field has begun to change. The days of the lone astronomer at the eyepiece are already gone. Data are being taken faster than they can be processed and are stored in easily accessible ways, and massive international teams of astronomers work together. At the recent International Astronomers Meeting in Rio de Janeiro, astronomer Ray Norris of Australia’s Commonwealth Scientific and Industrial Research Organization (CSIRO) discussed these changes, how far they can go, what we might learn, and what we might lose.

Observatories

One of the ways astronomers have long changed the field is by collecting more light, allowing them to peer deeper into space. This has required telescopes with greater light gathering power and, consequently, larger diameters. These larger telescopes also offer improved resolution, so the benefits are clear. As such, telescopes in the planning stages have names indicative of immense sizes. The ESO’s “Overwhelmingly Large Telescope” (OWL), the “Extremely Large Array” (ELA), and “Square Kilometer Array” (SKA) are all massive telescopes costing billions of dollars and involving resources from numerous nations.
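The scaling behind that push for size is simple enough to sketch. Collecting area grows with the square of the aperture diameter, and the diffraction-limited resolution (the Rayleigh criterion, roughly 1.22 λ/D) improves linearly with it. The apertures and the 550 nm wavelength below are illustrative assumptions, not specifications of any of the telescopes named above.

```python
RAD_TO_ARCSEC = 206_264.806  # arcseconds per radian


def light_gathering_ratio(d_small_m, d_large_m):
    """How many times more light the larger aperture collects (area scales as D^2)."""
    return (d_large_m / d_small_m) ** 2


def rayleigh_limit_arcsec(wavelength_m, diameter_m):
    """Diffraction-limited angular resolution: theta = 1.22 * lambda / D."""
    return 1.22 * wavelength_m / diameter_m * RAD_TO_ARCSEC


WAVELENGTH = 550e-9  # assumed visible-light wavelength in metres

for diameter in (10.0, 40.0, 100.0):  # assumed apertures in metres
    gain = light_gathering_ratio(10.0, diameter)
    limit = rayleigh_limit_arcsec(WAVELENGTH, diameter)
    print(f"D = {diameter:5.0f} m: {gain:6.0f}x the light of a 10 m mirror, "
          f"resolution limit {limit * 1000:.2f} milliarcsec")
```

This ignores atmospheric seeing, which in practice keeps ground-based telescopes from reaching the diffraction limit without adaptive optics.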

But as sizes soar, so too does the cost. Already, observatories are straining budgets, especially in the wake of a global recession. Norris states, “To build even bigger telescopes in twenty years time will cost a significant fraction of a nation’s wealth, and it is unlikely that any nation, or group of nations, will set a sufficiently high priority on astronomy to fund such an instrument. So astronomy may be reaching the maximum size of telescope that can reasonably be built.”

Thus, instead of the fixation on light gathering power and resolution, Norris suggests that astronomers will need to explore new areas of potential discovery. Historically, major discoveries have been made in this manner. The discovery of Gamma-Ray Bursts occurred when our observational regime was expanded into the high energy range. The spectral range is now fairly well covered, but other domains still have a large potential for exploration. For instance, as CCDs were developed, the exposure times for images were shortened and new classes of variable stars were discovered. Even shorter duration exposures have created the field of asteroseismology. With advances in detector technology, this lower boundary could be pushed even further. On the other end, the stockpiling of images over long times can allow astronomers to explore the history of single objects in greater detail than ever before.

Data Access

In recent years, many of these changes have been pushed forward by large survey programs like the 2 Micron All Sky Survey (2MASS) and the All Sky Automated Survey (ASAS) (just to name two of the numerous large scale surveys). With these large stores of pre-collected data, astronomers are able to access astronomical data in a new way. Instead of proposing telescope time and then hoping their project is approved, astronomers increasingly have unfettered access to data. Norris proposes that, should this trend continue, the next generation of astronomers may do vast amounts of work without even directly visiting an observatory or planning an observing run. Instead, data will be culled from sources like the Virtual Observatory.

Of course, there will still be a need for deeper and more specialized data. In this respect, physical observatories will still see use. Already, much of the data taken from even targeted observing runs is making it into the astronomical public domain. While the teams that design projects still get first pass on data, many observatories release the data for free use after an allotted time. In many cases, this has led to another team picking up the data and discovering something the original team had missed. As Norris puts it, “much astronomical discovery occurs after the data are released to other groups, who are able to add value to the data by combining it with data, models, or ideas which may not have been accessible to the instrument designers.”

As such, Norris recommends encouraging astronomers to contribute data in this way. Often a research career is built on numbers of publications. However, this runs the risk of punishing those who spend large amounts of time on a single project that produces only a small number of publications. Instead, Norris suggests a system by which astronomers would also earn recognition for the amount of data they’ve helped release into the community, as this also increases the collective knowledge.

Data Processing

Since there is a clear trend towards automated data taking, it is quite natural that much of the initial data processing can be automated as well. Before images are suitable for astronomical research, they must be cleaned of noise and calibrated. Many techniques require further processing that is often tedious. I myself have experienced this: much of a ten-week summer internship I attended involved the repetitive task of fitting profiles to the point-spread function of stars for dozens of images, and then manually rejecting stars that were flawed in some way (such as being too near the edge of the frame and partially chopped off).
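The edge-rejection step in particular is the kind of chore that is easy to hand to a script. Here is a minimal sketch, assuming the star positions are already available as (x, y) pixel centroids; the function name, frame size, and margin are hypothetical choices for illustration rather than part of any particular reduction package.

```python
def reject_edge_stars(stars, width, height, margin):
    """Split star centroids into those safely inside the frame and those too near an edge.

    stars  -- iterable of (x, y) pixel positions
    margin -- minimum allowed distance, in pixels, from any frame edge
    """
    kept, rejected = [], []
    for x, y in stars:
        inside = (margin <= x <= width - margin) and (margin <= y <= height - margin)
        (kept if inside else rejected).append((x, y))
    return kept, rejected


# Hypothetical centroids on a 1024 x 1024 pixel frame.
stars = [(3.0, 500.0), (512.0, 512.0), (1020.0, 40.0), (100.0, 1015.0)]
kept, rejected = reject_edge_stars(stars, width=1024, height=1024, margin=10)
print("kept:", kept)
print("rejected:", rejected)
```

A sensible margin would normally be tied to the fitting radius of the point-spread function, so that every kept star has its full profile on the chip.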

While this is often a valuable experience that teaches budding astronomers the reasoning behind processes, it can certainly be expedited by automated routines. Indeed, many techniques astronomers use for these tasks are ones they learned early in their careers and may well be out of date. As such, automated processing routines could be programmed to employ the current best practices to allow for the best possible data.

But this method is not without its own perils. In such an instance, new discoveries may be passed up. Significantly unusual results may be interpreted by an algorithm as a flaw in the instrumentation or a cosmic ray strike and rejected instead of identified as a novel event that warrants further consideration. Additionally, image processing techniques can still contain artifacts from the techniques themselves. Should astronomers not be at least somewhat familiar with the techniques and their pitfalls, they may interpret artificial results as a discovery.
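A toy example makes the risk concrete. The sketch below applies a simple one-pass outlier rejection based on the median and the median absolute deviation (MAD), a common robust alternative to mean-and-sigma clipping. The brightness values are invented for illustration, and the routine is a generic technique rather than the method of any specific pipeline. Note that it cannot tell a detector glitch from a genuine flare: both are simply "too far from the median".

```python
import statistics


def mad_clip(values, n_sigma=3.0):
    """Reject points more than n_sigma robust deviations from the median.

    The MAD is scaled by 1.4826 so that it estimates the standard
    deviation for normally distributed data.
    """
    median = statistics.median(values)
    mad = statistics.median(abs(v - median) for v in values)
    if mad == 0:
        return list(values), []  # no spread to measure, so keep everything
    limit = n_sigma * 1.4826 * mad
    kept = [v for v in values if abs(v - median) <= limit]
    rejected = [v for v in values if abs(v - median) > limit]
    return kept, rejected


# Invented brightness measurements; the last one could be a flaw or a real event.
measurements = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 25.0]
kept, rejected = mad_clip(measurements)
print("kept:", kept)
print("rejected:", rejected)
```

One practical safeguard is to log rejected points rather than discard them, so that a human can still review what the algorithm threw away.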

Data Mining

With the vast increase in data being generated, astronomers will need new tools to explore it. Already, there have been efforts to tag data with appropriate identifiers with programs like Galaxy Zoo. Once such data is processed and sorted, astronomers will quickly be able to compare objects of interest at their computers whereas previously observing runs would be planned. As Norris explains, “The expertise that now goes into planning an observation will instead be devoted to planning a foray into the databases.” During my undergraduate coursework (ending 2008, so still recent), astronomy majors were only required to take a single course in computer programming. If Norris’ predictions are correct, the courses students like me took in observational techniques (which still contained some work involving film photography) will likely be replaced with more programming as well as database administration.

Once the data are organized, astronomers will be able to quickly compare populations of objects on scales never before seen. Additionally, by easily accessing observations from multiple wavelength regimes they will be able to get a more comprehensive understanding of objects. Currently, astronomers tend to concentrate in one or two ranges of spectra. But access to so much more data will force astronomers to diversify further or work collaboratively.
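What might a “foray into the databases” look like in practice? As a rough sketch, the snippet below builds two tiny in-memory catalogs with Python’s built-in `sqlite3` module and joins them to find objects that are much brighter in the infrared than in visible light. The table names, object IDs, and magnitudes are all made up for illustration; real archives have their own schemas and query services.

```python
import sqlite3

# Two hypothetical catalogs of the same patch of sky in different wavelength regimes.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE optical  (obj_id TEXT PRIMARY KEY, v_mag REAL);
    CREATE TABLE infrared (obj_id TEXT PRIMARY KEY, k_mag REAL);
""")
conn.executemany("INSERT INTO optical VALUES (?, ?)",
                 [("A", 12.0), ("B", 14.5), ("C", 9.8)])
conn.executemany("INSERT INTO infrared VALUES (?, ?)",
                 [("A", 11.2), ("B", 9.5), ("D", 8.0)])


def red_objects(connection, min_color):
    """Return (obj_id, V-K) for objects seen in both catalogs with V-K >= min_color."""
    query = """
        SELECT o.obj_id, o.v_mag - i.k_mag AS v_minus_k
        FROM optical AS o
        JOIN infrared AS i ON o.obj_id = i.obj_id
        WHERE o.v_mag - i.k_mag >= ?
        ORDER BY v_minus_k DESC
    """
    return connection.execute(query, (min_color,)).fetchall()


print(red_objects(conn, 3.0))
```

Objects C and D never appear in the result because each exists in only one catalog, which is itself a reminder that cross-matching is where much of the real work lies.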

Conclusions

With all the potential for advancement, Norris concludes that we may be entering a new Golden Age of astronomy. Discoveries will come faster than ever since data is so readily available. He speculates that PhD candidates will be doing cutting edge research shortly after beginning their programs. I question why advanced undergraduates and informed laymen wouldn’t as well.

Yet for all the possibilities, the easy access to data will attract the crackpots too. Already, incompetent frauds swarm journals looking for quotes to mine. How much worse will it be when they can point to the source material and their bizarre analysis to justify their nonsense? To combat this, astronomers (like all scientists) will need to improve their public outreach programs and prepare the public for the discoveries to come.

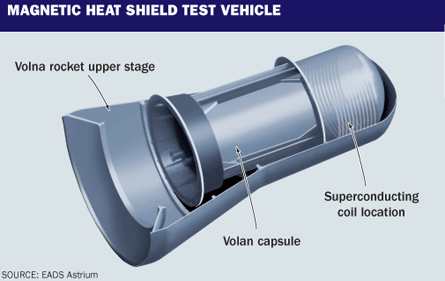

The Next Generation of Heat Shield: Magnetic

Heat shields are an important part of any space vehicle that re-enters the Earth’s atmosphere. The next generation of heat shields to protect astronauts and payloads on their re-entry into the Earth’s atmosphere may use superconducting magnets to deflect the plasma that forms in front of spacecraft as they travel at high speeds in the air. The first test of such a heat shield could happen as early as ten years from now, and the basic technology is already in development.

Traditional heat shields use the process of ablation to disperse heat away from the capsule. Basically, the material that covers the outside of the capsule gets worn away as it is heated up, taking the heat with it. The space shuttle uses tough insulated tiles. A magnetic heat shield would be lighter and much easier to re-use, eliminating the cost of re-covering the outside of a craft after each entry.

A magnetic heat shield would use a superconductive magnetic coil to create a very strong magnetic field near the leading edge of the vehicle. This magnetic field would deflect the superhot plasma that forms at the extreme temperatures caused by friction near the surface of an object entering the Earth’s atmosphere. This would reduce or completely eliminate the need for insulative or ablative materials to cover the craft.

Problems with the heat shield on a spacecraft can be disastrous, even fatal; the Columbia disaster was due largely to the failure of insulative tiles on the shuttle, caused by damage incurred during launch. A magnetic system might be more reliable and less prone to damage than current heat shield technology.

At the European Air and Space Conference 2009 in Manchester in October, Detlev Konigorski from the private aerospace firm Astrium EADS said that with the cooperation of German aerospace center DLR and the European Space Agency, Astrium was developing a potential magnetic heat shield for testing within the next few years.

The initial test vehicle would be launched from a submarine aboard a Russian Volna rocket on a suborbital trajectory, and land in the Russian Kamchatka region. A Russian Volan escape capsule will be outfitted with the device, and the re-entry trajectory will take it up to speeds near Mach 21.

Though the scientists are currently testing the capabilities of a superconducting coil to perform this feat, there is the challenge of calculating changes to the trajectory of a test vehicle, because the air will be deflected away much more than with current heat shield technology. The ionized gases surrounding a capsule using a magnetic heat shield would also throw a wrench into the current technique of using radio signals for telemetry data. Of course, there is a long list of other technical challenges to overcome before the testing will happen, so don’t expect to see the Orion crew vehicle outfitted with one!

Source: Physorg