To say NASA has been undergoing some massive administrative changes lately is a huge understatement. One of the more concerning ones, according to a new paper presented at the 57th Lunar and Planetary Science Conference by Ari Koeppel and Casey Dreier of the Planetary Society, is the trend towards the Silicon Valley mindset of “move fast and break things” - which they argue doesn’t work very well when it comes to producing valuable science.

To make that case, they analyzed 90 science missions launched between 1994 and 2023 that focused on Planetary Science, Heliophysics, and Astrophysics. To put it bluntly, they found that relying primarily on very-low-cost missions (i.e., those with a total cost under $100M) is a flawed strategy and will not generate high-impact science.

Their data focused on the number of “high-impact” papers that resulted from very-low-cost missions. By their definition, a “high-impact paper” is one with over 100 citations - meaning it’s had a meaningful impact on the discourse of its specific scientific field. According to the research, not a single high-impact paper resulted from a planetary science mission that cost less than $100M, and only 0.02% of high-impact papers in astrophysics came from similarly budgeted missions. Heliophysics missions in this cost category performed better, accounting for 7.9% of the total high-impact papers for that division.
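To make the metric concrete, here is a minimal sketch of how a share like the ones above could be computed for a single science division. The function name, the cost-bin labels, and the sample records are all made up for illustration; they are not the authors' code or data. The only thing taken from the article is the "over 100 citations" cutoff.

```python
HIGH_IMPACT_THRESHOLD = 100  # a paper needs MORE than this many citations

def high_impact_share(papers):
    """Percent of a division's high-impact papers that fall in each cost bin.

    `papers` is an iterable of (cost_bin, citation_count) pairs.
    Bins with no high-impact papers are reported as 0.0.
    """
    counts = {}
    for cost_bin, citations in papers:
        counts.setdefault(cost_bin, 0)
        if citations > HIGH_IMPACT_THRESHOLD:
            counts[cost_bin] += 1
    total = sum(counts.values())
    if total == 0:
        return {cost_bin: 0.0 for cost_bin in counts}
    return {cost_bin: 100.0 * n / total for cost_bin, n in counts.items()}

# Hypothetical records, NOT data from the paper.
example = [
    ("<$100M", 40),
    ("<$100M", 150),
    ("$100-450M", 220),
    (">$450M", 101),
    (">$450M", 100),  # exactly 100 citations does not count as "over 100"
    (">$450M", 900),
]
shares = high_impact_share(example)
```

With these invented numbers, four papers clear the threshold, so each cost bin's share is its count of high-impact papers divided by four.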

*Table of the percentage of “high-impact” papers for each mission type and cost category. Credit - A.H.D. Koeppel & C. Dreier*


The lack of high-impact planetary science papers is probably due to the fact that no very-low-cost planetary science mission has actually worked. It’s admittedly hard to write an effective paper on the science of a mission that didn’t collect any data. But what about the impact of time on these numbers? Don’t older missions have more time to accrue more citations and therefore score better on this metric?

In short, yes, that is a weakness of most bibliometric research. However, the authors recognized this problem and even pointed out examples in the paper - such as Perseverance - of missions whose papers haven’t necessarily had enough time to reach 100 citations. To account for this time bias, they investigated what they called “time-domain sensitivities by looking at production rates” of papers. In other words, they put the total number of “high-impact” papers in the numerator and divided it by the total number of years since the start of science operations.
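The production rate described above is simple arithmetic, sketched below under the assumption that whole calendar years are a good enough unit. The function name, parameters, and example figures are hypothetical and are not drawn from the paper.

```python
def production_rate(high_impact_papers, science_start_year, as_of_year):
    """High-impact papers per year since science operations began."""
    years = as_of_year - science_start_year
    if years <= 0:
        raise ValueError("science operations must start before as_of_year")
    return high_impact_papers / years

# Hypothetical missions, NOT figures from the paper.
older_mission = production_rate(30, science_start_year=2005, as_of_year=2025)
failed_mission = production_rate(0, science_start_year=2020, as_of_year=2025)
```

Normalizing by years lets a younger mission be compared with an older one, but a mission that never returned data still scores zero no matter how the denominator changes.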

Zero divided by any number of years is still zero, though, so even this metric didn’t look much better for the very-low-cost missions. If these low-cost missions keep failing, this metric will not get any better. Another metric they looked at was the “time-to-science” - how long it took after a mission began to get its first major “hit”. According to the paper, “Very-low-cost missions can take years to publish a highly cited result, largely negating their rapid development advantage”. In other words, the “move fast” model so prevalent in Silicon Valley doesn’t really work when the scientific community is slow to follow up on the research it produces.

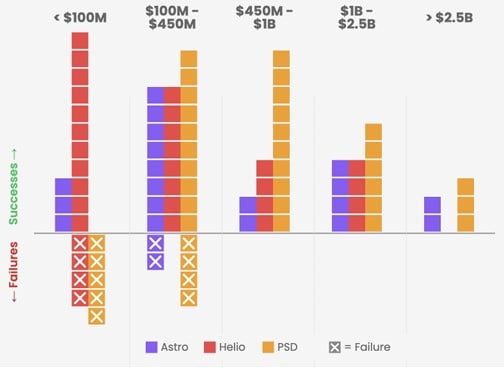

*Graph showcasing the different missions in the different cost and science categories, including the failures. Credit - A.H.D. Koeppel & C. Dreier*


So what is causing these difficulties for very-low-cost missions? There are two main explanations. The first is, simply, that more of them fail. Nine of the missions that cost less than $100M didn’t produce any science at all, whereas the only other category with any failures was the $100-450M range, which had only six failures and many more successes than its lower-cost cousins. None of the missions costing more than $450M failed at all. But that’s only part of the reason.

The authors’ main point is that low-cost missions are quite simply constrained in the cutting-edge science they are able to perform. These missions are severely restricted in their size, complexity, and the capability of the scientific instruments on board. In other words, they’re simply not as good as larger missions at collecting quality data, whether they actually get to the phase of data collection or not. As the authors put it, it would take a “dramatic change in the quality, reliability, and capability of low-cost missions and instrumentation” for them to make a real-world impact at the same level as larger missions.

That flies directly in the face of Project Athena, the NASA administration’s pivot to lower-cost missions. You can’t iterate yourself to a larger, more capable sensor or a physically larger housing for a larger sunshield. These things can’t be modularized and iterated, at least at this point in our technological development. So while running ten smaller missions for the cost of one large “flagship” might seem like a good trade-off, the paper makes the point that it actually might not be, because the smaller ones will never have the same capabilities as the larger one, even collectively.

There does appear to be a sweet spot, though. Mid-tier missions (i.e., those between $250–750M in budget) seem to provide the minimum “time-to-science”, beating even smaller missions, with an average of just six years from project formulation to the publication of a major impact paper.

Whether the NASA administration will take this advice on board remains to be seen. The “move fast and break things” mentality has been deeply ingrained in the culture of many companies in the last few decades. But that doesn’t mean it’s what’s best for space exploration. And we might be doing ourselves a disservice by pivoting to that while ignoring the benefits of the larger missions that have provided the most comprehensive breakthroughs in science so far.

Learn More:

A.H.D. Koeppel & C. Dreier - ASSESSING SCIENTIFIC PRODUCTIVITY OF NASA’S SPACE MISSIONS BY COST

UT - How To Aerobrake a Mission To Uranus On the Cheap

UT - Can Philanthropy Fast-Track a Flagship Telescope?

UT - The Sun Reaches Solar Maximum in 2032. A new NASA Flagship Mission Could Give Us a Perfect View

Universe Today
