Researchers used ultracold atoms in a vacuum chamber to simulate the early universe, and came up with some interesting findings about how the environment looked shortly after the Big Bang occurred.

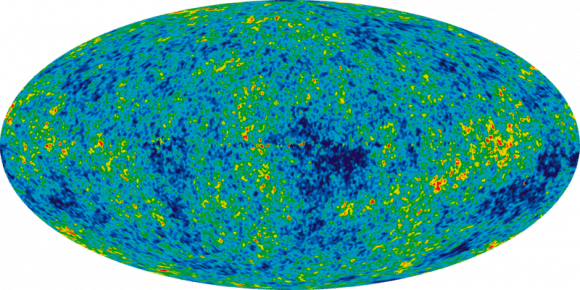

Specifically, the atoms clustered in patterns similar to the cosmic microwave background — believed to be the echo of the intense burst that formed the beginning of the universe. Scientists have mapped the CMB at progressively higher resolution using several telescopes, but this experiment is the first of its kind to show how structure evolved at the beginning of time as we understand it.

The Big Bang theory (not to be confused with the popular television show) is intended to describe the universe’s evolution. While many pundits say it shows how the universe came “from nothing”, the concordance cosmological model that describes the theory says nothing about where the universe came from. Instead, it focuses on applying two big physics models (general relativity and the standard model of particle physics). Read more about the Big Bang here.

CMB is, more simply stated, electromagnetic radiation that fills the Universe. Scientists believe it shows an echo of a time when the Universe was much smaller, hotter and denser, and filled to the brim with hydrogen plasma. The plasma and radiation surrounding it gradually cooled as the Universe grew bigger. (More information on the CMB is here.) At one time, the glow from the plasma was so dense that the Universe was opaque, but transparency increased as stable atoms formed. But the leftovers are still visible in the microwave range.

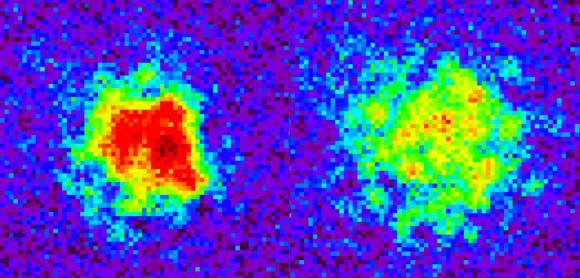

The new research used ultracold cesium atoms in a vacuum chamber at the University of Chicago. When the team cooled these atoms to a billionth of a degree above absolute zero (which is -459.67 degrees Fahrenheit, or -273.15 degrees Celsius), the structures they saw appeared very similar to the CMB.
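As a quick check on the temperature figures quoted above, here is a minimal Python sketch of the unit conversions. The function names and the 1 nK sample value are illustrative assumptions based on the article's "a billionth of a degree" wording; they are not taken from the published research.

```python
# Check the absolute-zero figures quoted in the article.

def kelvin_to_celsius(kelvin: float) -> float:
    """Convert a temperature from kelvin to degrees Celsius."""
    return kelvin - 273.15


def celsius_to_fahrenheit(celsius: float) -> float:
    """Convert a temperature from degrees Celsius to degrees Fahrenheit."""
    return celsius * 9.0 / 5.0 + 32.0


absolute_zero_c = kelvin_to_celsius(0.0)
absolute_zero_f = celsius_to_fahrenheit(absolute_zero_c)

# Assumed sample temperature: one billionth of a kelvin (1 nK) above absolute zero.
sample_k = 1e-9
sample_c = kelvin_to_celsius(sample_k)

print(f"Absolute zero: {absolute_zero_c:.2f} C, {absolute_zero_f:.2f} F")
print(f"Sample sits {sample_k:.0e} K above absolute zero")
```

At two decimal places the sample temperature is indistinguishable from absolute zero itself, which gives a sense of how cold the atoms are.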

By quenching the 10,000 atoms in the experiment to control how strongly the atoms interact with each other, they were able to generate a phenomenon that is, very roughly speaking, similar to how sound waves move in air.

“At this ultracold temperature, atoms get excited collectively,” stated Cheng Chin, a physics researcher at the University of Chicago who participated in the research. This phenomenon was first described by Russian physicist Andrei Sakharov, and is known as Sakharov acoustic oscillations.

So why is the experiment important? It allows us to more closely track what happened after the Big Bang.

The CMB is simply a frozen moment in time and is not evolving, so researchers have to turn to the lab to work out how such structure develops.

“In our simulation we can actually monitor the entire evolution of the Sakharov oscillations,” said Chen-Lung Hung, who led the research, earned his Ph.D. in 2011 at the University of Chicago, and is now at the California Institute of Technology.

Both Hung and Chin plan to do more work with the ultracold atoms. Future research directions could include things such as how black holes work, or how galaxies were formed.

You can read the published research online on Science’s website.

Source: University of Chicago

Here’s the relevant paper (PDF): From Cosmology to Cold Atoms: Observation of Sakharov Oscillations in Quenched Atomic Superfluids.

Thanks for the summary but you left out the coolest part of the story! You should mention that the entire simulation was run on NASA’s supercomputer Pleiades, ranked 7th of the world’s top 500 supercomputers. I don’t know if anyone has read Mark Solomon’s books, but according to him, quantum computers will soon be able to logarithmically increase computing power relative to today’s standards. He states that someday entire universes will be run on computers, which would then lend evidence that we ourselves live in a simulated universe. Across many universe simulations there would be some that produce many more universe simulations (which would continue on). He theorizes that there has been an evolution of universes, with better physics in universes over time to better support advanced civilizations, who would then be able to run more simulations themselves. Found a brief synopsis.

https://en.wikipedia.org/wiki/Mark_J._Solomon

There’s a very good Wired article along these lines. I’ve certainly considered that we are living in a simulation, and therefore a ‘creator’ of that simulation is also possible. http://www.wired.com/wired/archive/10.12/holytech.html

Good point. And one problem with this, as stated in the On Computer Simulated Universes book, is that you get into the problem of an infinite regress. Who created the creator? Who created the creator’s creator, etc.

This is pretty much the first time I’ve seen the real resource dependence of quantum computing (QC) described outside of computer scientist (CS) Scott Aaronson’s blogging.

Aaronson notes, though, that a sublinear increase won’t give us an increased ability to tackle the exponential resource requirements of computationally hard problems. Considering the increased cost/bit of QC, it may not make any difference in the world of computing. (Except for some specific algorithms where QC is known to ace the test.)
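To make the scaling point concrete, here is a rough Python sketch. The comment does not name an algorithm, so as an assumption this uses Grover’s search, the textbook example of a quadratic quantum speedup, as a stand-in. The function names are illustrative, and the query counts are the standard approximations rather than measurements of any real machine.

```python
import math


def classical_queries(n_bits: int) -> int:
    """Worst-case brute-force search over all n-bit candidates."""
    return 2 ** n_bits


def grover_queries(n_bits: int) -> int:
    """Approximate Grover query count: about (pi / 4) * sqrt(2 ** n_bits)."""
    return math.ceil((math.pi / 4) * math.sqrt(2 ** n_bits))


# Assumed problem sizes, chosen only to show the trend.
for n_bits in (20, 40, 80):
    print(
        f"{n_bits} bits: classical ~{classical_queries(n_bits):.3e}, "
        f"Grover ~{grover_queries(n_bits):.3e}"
    )
```

Under these assumptions the quantum count grows like 2^(n/2) rather than 2^n: far fewer queries, but still exponential in the number of bits, so doubling the problem size still squares the work.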

As for the simulation hypothesis, it is nice to speculate, but the question is whether it is empirically fruitful. The more realistic Boltzmann Brains (which we do know the physics for) have a problem being taken seriously, I think, despite efforts from CS Aaronson, physicists Carroll & Bousso, and others.

Personally I don’t see how it is supposed to work. Quantum mechanics assures us we will always be able in principle to distinguish between our world and a simulated world, as it minimizes variables (and parameters), the so-called “no hidden variables” prediction.

And I have seen papers on arXiv that claim this outcome in practice. They are elaborate, though. My own idea is that increasing precision and decreasing time would make a simulation eventually break.

The LHC does that work for the electroweak (EW) sector. I.e. we already know, pretty much today and for sure before 2020, that such simulations can’t be performed with mechanisms based on Standard Model particles. The experiments scour the whole EW sector for deviations. A simulation would show up as interaction remnants that weren’t SM predictions, unless I am mistaken.*

So I would say that right now the simulation physics may work in principle, but it asks for new physics (new particles) that we don’t know exist.

* Assuming you don’t have a Matrix-style policed world that captures attempts to take the red pill.

I don’t think anyone is proposing a Matrix-style world (I hope not anyway). I think it is just an attempt to follow the logic to where it takes you. When considering Dr. Bostrom’s argument (along with Dr. Solomon’s expanded thoughts), there is a plausibility (from at least a philosophical perspective) that is undeniable. But yes, I completely agree. Eventually we are going to need some empirical studies that would point one way or the other. And it is my understanding that these studies are starting up or have been running (e.g., out of University of Washington). The key here is that we have always assumed that the question, “Do we live in a simulated universe?” was unfalsifiable. But it appears that this view might just be a mere limitation on our ability to think through these difficult questions in a more creative manner. It will be interesting to see where the Simulation Hypothesis is after five or ten years.

It is always good to have independent tests. But this is a misunderstanding:

The CMB is a relic, but it is a dynamic fossil. Its patterns evolve for a local observer.

What a local observer sees is an image of a surface (the cosmic horizon). As the universe ages and the cosmic horizon expands, the image will change. The redshift will change over time obviously.

But more importantly an observer will see different patches of the cloud with different thermal patterns, because the horizon distance has shifted. We see further into the immense cloud, because the surface just seen has cooled down from plasma to gas, the border of which is what releases free photons.*

This is described by a researcher here:

“The answer to this question is present in Stuart Lange’s senior thesis and I think it’s really cool. The answer is that, if we wait billions of years, the CMB will have completely changed. You can see a simulated film of this below (note the scale in years).

This might then sound like it is just a cute curiosity, nice but unobservable. But it isn’t quite. Using very ambitious estimates, Lange estimates in his thesis that even in a timeframe of the order of 100 years the minute differences that will have occurred might just be detectable. If they were, we could then do real-time cosmology by calculating the statistical properties of this difference map. Those will be cool days.”

[My bold]

So we won’t make use of it, but future cosmologists can compare theoretical predictions and this toy model with observations.

Incidentally, real-time cosmology would be analogous to observing traces of habitation on other planets. Comparing the statistical properties of projected early geo- and biochemistries could allow for real-time astrobiology, showing how abiogenesis conditions change over cosmological time.

[It is worthwhile to click over and watch the simulation. One can see that the surface of the hot gas cloud boils as you would naively expect from similar surfaces (e.g. the Sun*).]

* The fast exponential behavior of the optical opacity is the same as for the Sun and other stars, so exactly as it gives the Sun a distinct surface the cloud will have one too.

This is what I consider the beautiful part: an original creator has always paradoxically existed. So all of existence is built on a paradox. Absurdity is normality. We influence our quantum reality with our feelings and intentions.