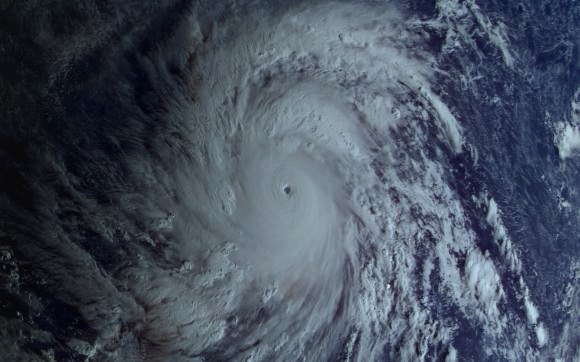

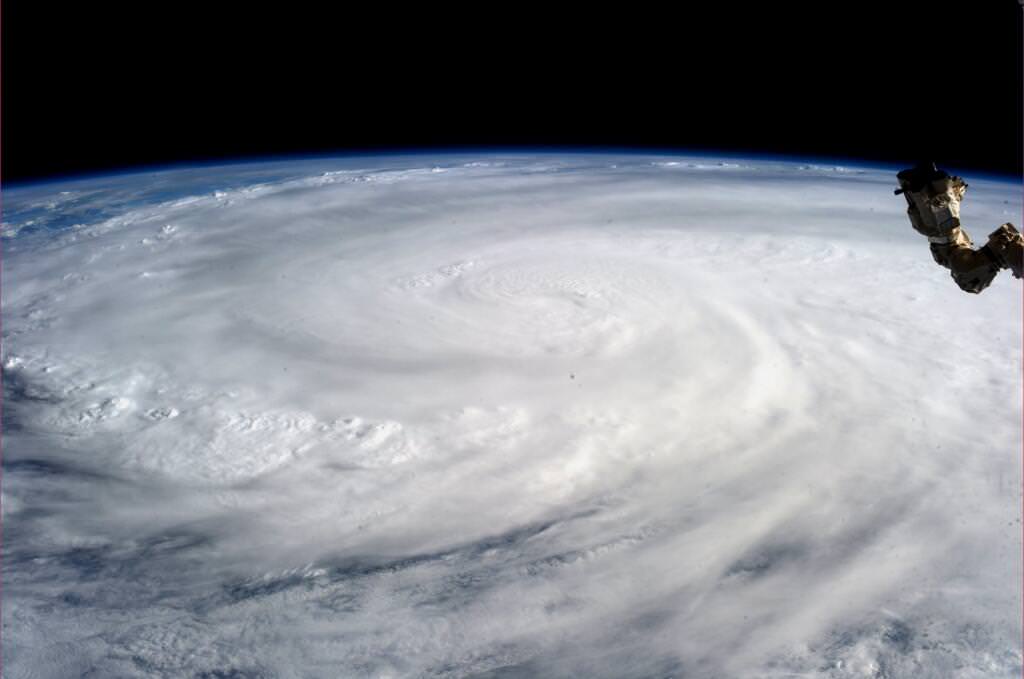

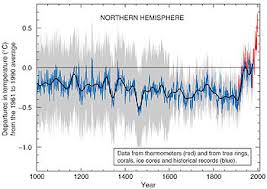

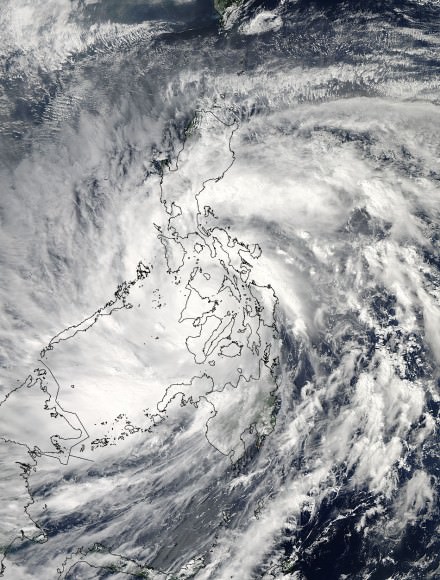

Super Typhoon Haiyan over the Philippines on November 9, 2013, as imaged from Earth orbit by NASA astronaut Karen Nyberg aboard the International Space Station. Category 5 killer storm Haiyan stretches across the entire photo from an altitude of about 250 miles (400 kilometers). Credit: NASA/Karen Nyberg

See more Super Typhoon Haiyan imagery and video below

NASA GODDARD SPACE FLIGHT CENTER, MARYLAND – Super Typhoon Haiyan smashed into the island nation of the Philippines on Friday, Nov. 8, with maximum sustained winds estimated by the U.S. Navy Joint Typhoon Warning Center to exceed 195 mph (315 kilometers per hour), leaving an enormous region of catastrophic death and destruction in its wake.

The Red Cross estimates more than 1,200 deaths so far. The final toll could be significantly higher. Local media reports today say bodies of men, women and children are now washing ashore.

The enormous scale of Super Typhoon Haiyan can be vividly seen in the space imagery collected here, captured by NASA, ISRO and Russian satellites as well as by astronaut Karen Nyberg flying overhead on board the International Space Station (ISS).

Super Typhoon Haiyan is reported to be the largest and most powerful storm ever to make landfall in recorded human history.

Haiyan is classified as a Category 5 monster storm on the U.S. Saffir-Simpson scale.

It struck the central Philippines municipality of Guiuan, at the southern tip of the province of Eastern Samar, early Friday morning, Nov. 8, at 4:45 a.m. local time (20:45 UTC on Thursday, Nov. 7).

As Haiyan hit the central Philippines, NASA says wind gusts exceeded 235 mph (379 kilometers per hour).

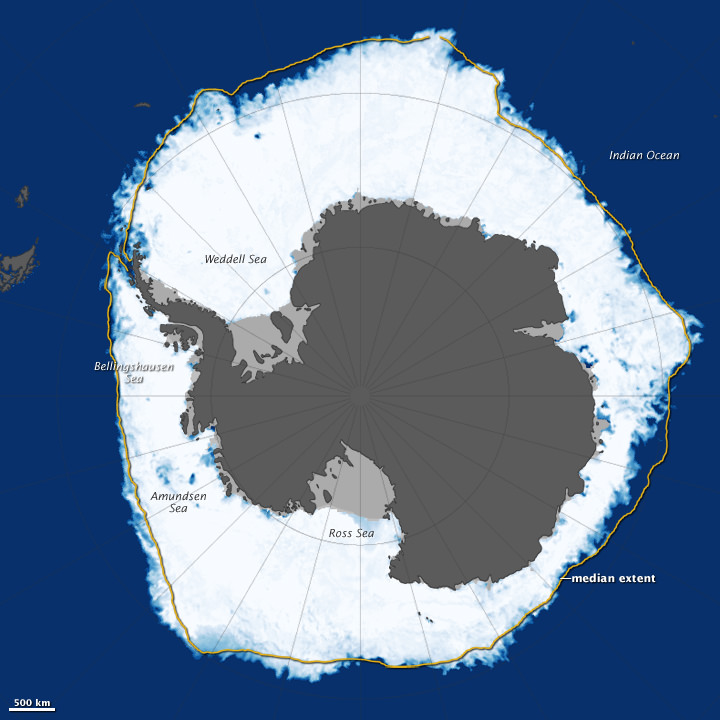

The high-resolution imagery and precise measurements provided by the world's constellation of Earth-observing satellites (including those of NASA, Roscosmos, ISRO, ESA and JAXA) are absolutely essential for tracking killer storms and for giving residents of affected areas enough advance warning to evacuate, helping to minimize the death toll and damage.

More than 800,000 people were evacuated. The storm surge caused waves exceeding 30 feet (10 meters), mudslides and flash flooding.

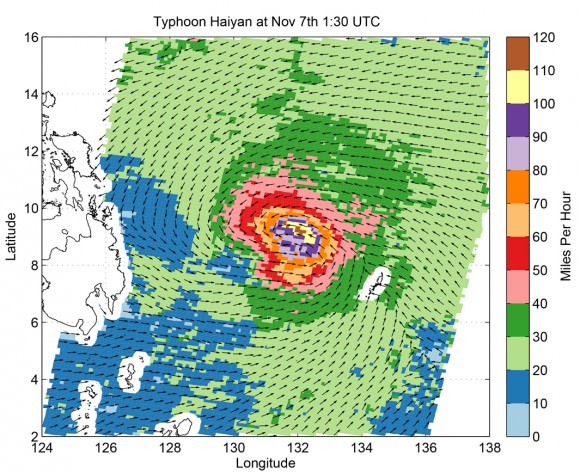

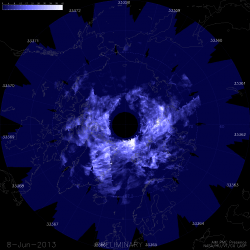

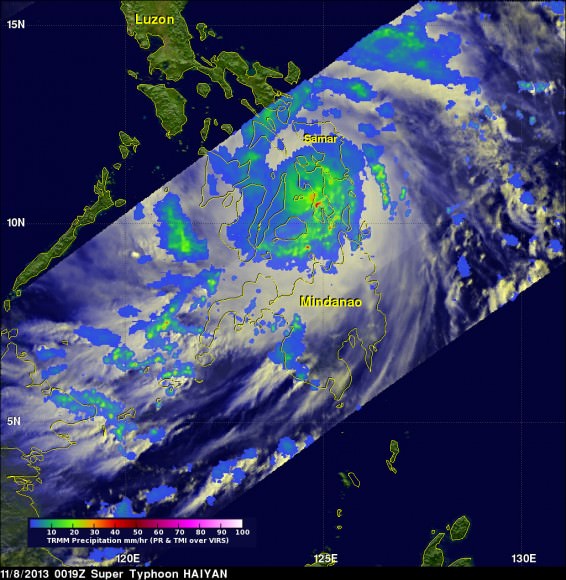

NASA’s Tropical Rainfall Measuring Mission (TRMM) satellite captured visible, microwave and infrared data on the storm just as it was crossing the island of Leyte in the central Philippines, reports NASA – see image below.

Rain rates are measured by the TRMM Precipitation Radar (PR) and TRMM Microwave Imager (TMI) and then combined with infrared (IR) data from the TRMM Visible Infrared Scanner (VIRS) by science teams working at NASA’s Goddard Space Flight Center in Greenbelt, Md.

Coincidentally, NASA Goddard has just completed assembly of the next-generation Global Precipitation Measurement (GPM) observatory, the weather satellite that replaces TRMM. I inspected the GPM satellite inside the Goddard clean room on Friday.

“GPM is a direct follow-up to NASA’s currently orbiting TRMM satellite,” Art Azarbarzin, GPM project manager, told Universe Today during my exclusive clean room inspection of the huge GPM satellite.

Credit: Ken Kremer/kenkremer.com

“TRMM is reaching the end of its usable lifetime. GPM launches in February 2014 and we hope it has some overlap with observations from TRMM.”

“The Global Precipitation Measurement (GPM) observatory will provide high resolution global measurements of rain and snow every 3 hours,” Dalia Kirschbaum, GPM research scientist, told me at Goddard.

GPM is equipped with advanced, higher resolution radar instruments. It is vital to continuing the TRMM measurements and will help provide improved forecasts and advance warning of extreme super storms like Hurricane Sandy and Super Typhoon Haiyan, Azarbarzin and Kirschbaum explained.

Video Caption: Super Typhoon Haiyan imaged on Nov 6 – 8, 2013 by the Russian Elektro-L satellite operating in geostationary orbit. Credit: Roscosmos via Vitaliy Egorov

The full magnitude of Haiyan’s destruction is just starting to be assessed as rescue teams reach the devastated areas where winds wantonly ripped apart homes, farms, factories, buildings and structures of every imaginable type vital to everyday human existence.

Typhoon Haiyan is moving westward and is expected to strike central Vietnam forcefully in a day or two. Mass evacuations are underway at this time.