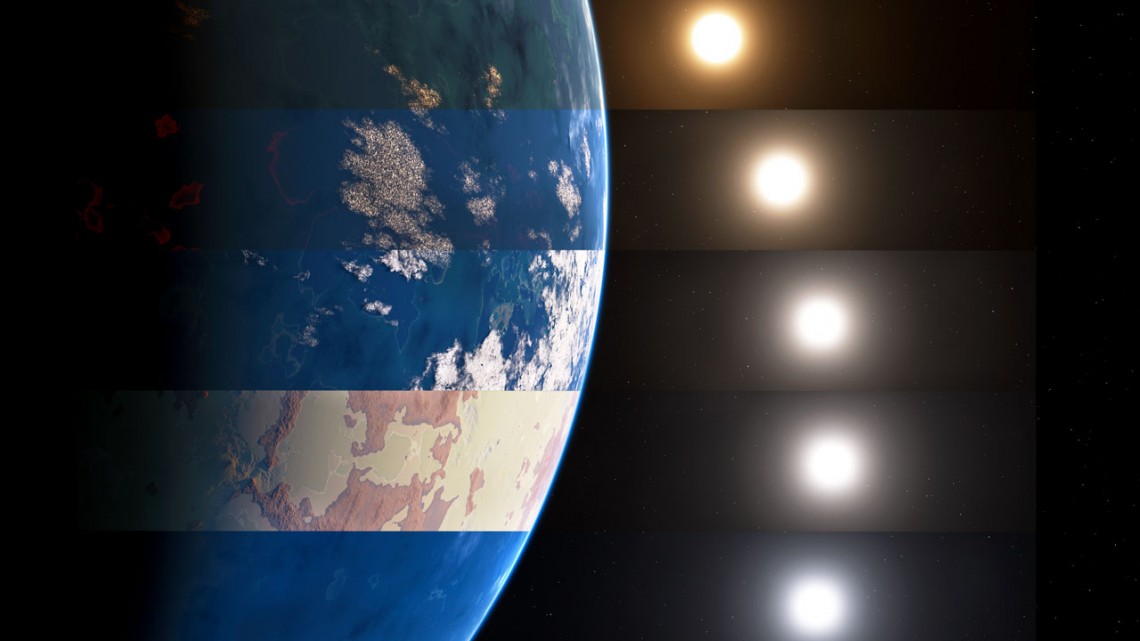

When the International Space Station (ISS) runs low on basic supplies, like food, water, and other necessities, its crew can be resupplied from Earth in a matter of hours. But when astronauts go to the Moon for extended periods in the coming years, resupply missions will take much longer to reach them. The same holds true for Mars, where resupply missions can take months to arrive and are also far more expensive.

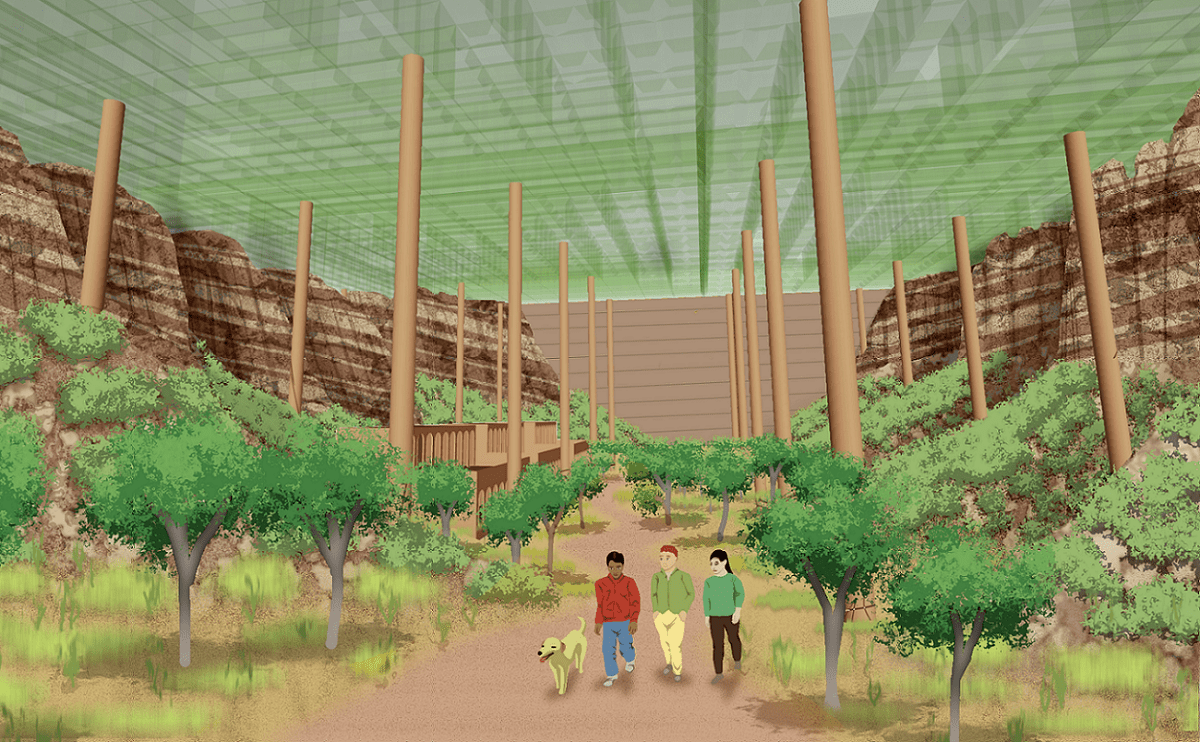

It’s little wonder, then, that NASA and other space agencies are looking to develop methods and technologies that will give their astronauts a degree of self-sufficiency. According to NASA-supported research conducted by Daniel Tompkins of Grow Mars and Anthony Muscatello (formerly of the NASA Kennedy Space Center), in-situ resource utilization (ISRU) methods will benefit immensely from some input from nature.

Continue reading “Practical Ideas for Farming on the Moon and Mars”