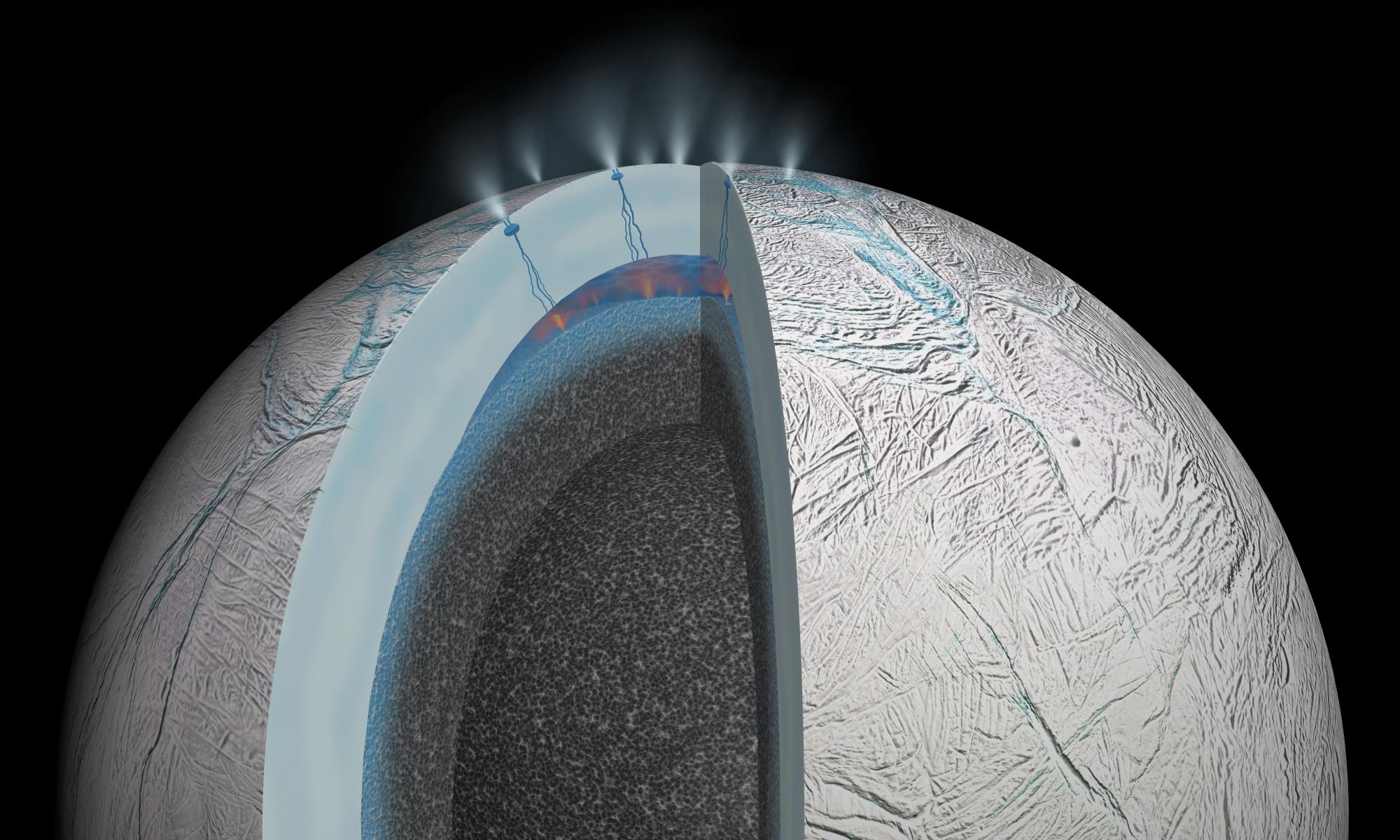

The joint NASA/ESA Cassini-Huygens mission revealed some amazing things about Saturn and its system of moons. During the thirteen years it spent studying the system, before the orbiter plunged into Saturn’s atmosphere on September 15th, 2017, it delivered some of the most compelling evidence to date that extra-terrestrial life could exist. Years later, scientists are still poring over the data it gathered.

For instance, a team of German scientists recently examined data the Cassini orbiter gathered around Enceladus’ southern polar region, where plumes regularly send jets of icy particles into space. In those particles they found signatures of organic compounds that could be the raw materials for amino acids, which are key building blocks of life. This latest evidence strengthens the case that life could exist beneath Enceladus’ icy crust.
